commit

dc67edd35a

35 changed files with 83051 additions and 0 deletions

Split View

Diff Options

-

+75 -0README.md

-

BINdocs/CC_data_size_stat.png

-

BINdocs/CC_domain.png

-

BINdocs/CC_language.png

-

BINdocs/CC_total_size.png

-

BINdocs/个人微信.jpg

-

BINdocs/交流群00.jpg

-

BINsrc/.DS_Store

-

+118 -0src/1-FilterLanguage/languageExtract.py

-

BINsrc/3-JunkDataFilter/.DS_Store

-

+189 -0src/3-JunkDataFilter/Filter_run.py

-

+21 -0src/3-JunkDataFilter/LICENSE

-

BINsrc/3-JunkDataFilter/models/.DS_Store

-

+95 -0src/3-JunkDataFilter/models/FastText.py

-

BINsrc/3-JunkDataFilter/models/__pycache__/FastText.cpython-36.pyc

-

+24 -0src/3-JunkDataFilter/sensitive-words/new-words.txt

-

+14600 -0src/3-JunkDataFilter/sensitive-words/sensitive_words_v1.txt

-

+16326 -0src/3-JunkDataFilter/sensitive-words/total.txt

-

+16367 -0src/3-JunkDataFilter/sensitive-words/total备份.txt

-

+724 -0src/3-JunkDataFilter/sensitive-words/yellow_words-v2.txt

-

+1242 -0src/3-JunkDataFilter/sensitive-words/yellow_words.txt

-

+560 -0src/3-JunkDataFilter/sensitive-words/反动词库.txt

-

+123 -0src/3-JunkDataFilter/sensitive-words/广告.txt

-

+326 -0src/3-JunkDataFilter/sensitive-words/政治类.txt

-

+14600 -0src/3-JunkDataFilter/sensitive-words/敏感词.txt

-

+178 -0src/3-JunkDataFilter/sensitive-words/暴恐词库.txt

-

+569 -0src/3-JunkDataFilter/sensitive-words/民生词库.txt

-

+437 -0src/3-JunkDataFilter/sensitive-words/涉枪涉爆违法信息关键词.txt

-

+14595 -0src/3-JunkDataFilter/sensitive-words/网址/网址.txt

-

+1242 -0src/3-JunkDataFilter/sensitive-words/色情词库.txt

-

+40 -0src/3-JunkDataFilter/sensitiveWords_test.py

-

+119 -0src/3-JunkDataFilter/train_eval.py

-

+91 -0src/3-JunkDataFilter/trie_tree_match.py

-

+155 -0src/3-JunkDataFilter/utils.py

-

+235 -0src/3-JunkDataFilter/utils_fasttext.py

+ 75

- 0

README.md

View File

| @@ -0,0 +1,75 @@ | |||

| # DataCollector | |||

| DataCollector项目主要介绍NLP预训练模型训练数据集资源、数据清洗过滤方法。 | |||

| <!-- [[1.数据集资源](#数据集资源)] --> | |||

| [[网页数据介绍及清洗过滤方法](#网页数据介绍及清洗过滤方法)] | |||

| - Common Crawl介绍 | |||

| - Common Crawl数据格式 | |||

| <!-- - (2)Common Crawl数据统计 --> | |||

| <!-- - (3)已有基于Common Crawl数据的研究工作 --> | |||

| - 基于common crawl WET格式原始数据清洗过滤方法 | |||

| - (1)不同语言数据分类过滤 | |||

| - (2)基于规则的过滤 | |||

| - (3)基于分类模型的垃圾数据过滤 | |||

| - (4)数据去重方法 | |||

| [[加入鹏程·PanGu-α微信交流群](#微信交流群)] | |||

| <!-- | |||

| # 数据集资源 | |||

| | 序号 | 数据集 | 数据集大小 |数据集说明| | |||

| | :-------------- | :---- | :------------------------------------------------------------ |:-------------------- | | |||

| | 1 | CLUECorpus2020 |100G |来自CLUE官方搜集 | | |||

| | 2 | CLUECorpus2020-14G |14G |来自wiki_zh_2019、webtext2019zh、new2016zh、comments2019四个开放数据集的合集 | | |||

| | 3 | Sogou-CA |3.2G |搜狗新闻语料数据集 | | |||

| | 4 | THUCNews |7.2G |清华新闻数据,包括14个类别:体育、娱乐、家居、彩票、房产、教育、时尚、时政、星座、游戏、社会、科技、股票、财经 | --> | |||

| # 网页数据介绍及清洗过滤方法 | |||

| ## Common Crawl介绍 | |||

| ### Common Crawl数据格式 | |||

| >Common Crawl网站提供了包含上百亿网页数据的免费数据库,并希望这项服务能激发更多新的研究或在线服务。Common Crawl原始数据包括三种格式:WARC、WAT、WET。 | |||

| > | |||

| >>WARC:WARC格式是抓取的原始数据,提供了到抓取过程的直接映射。该格式不仅存储了它联系的网站的HTTP响应(WARC-Type: response),它还存储了关于该信息是如何被请求的信息(WARC-Type: request)和抓取过程本身的元数据(WARC-Type: metadata)。 | |||

| >>WAT:WAT格式以JSON格式存储, WAT文件包含关于上面以WARC格式存储的记录的重要元数据。该元数据是为这三种类型的记录(元数据、请求和响应)中的每一种计算的。如果爬行的信息是HTML,计算的元数据包括返回的HTTP头和页面上列出的链接(包括链接的类型)。 | |||

| >>WET:由于许多任务只需要文本信息,普通抓取数据集提供了只包含提取的明文的湿文件。以WET格式存储文本数据的方法非常简单。WARC元数据包含各种细节,包括URL和明文数据的长度,明文数据紧随其后。 | |||

| <!-- ### (2)Common Crawl数据统计 | |||

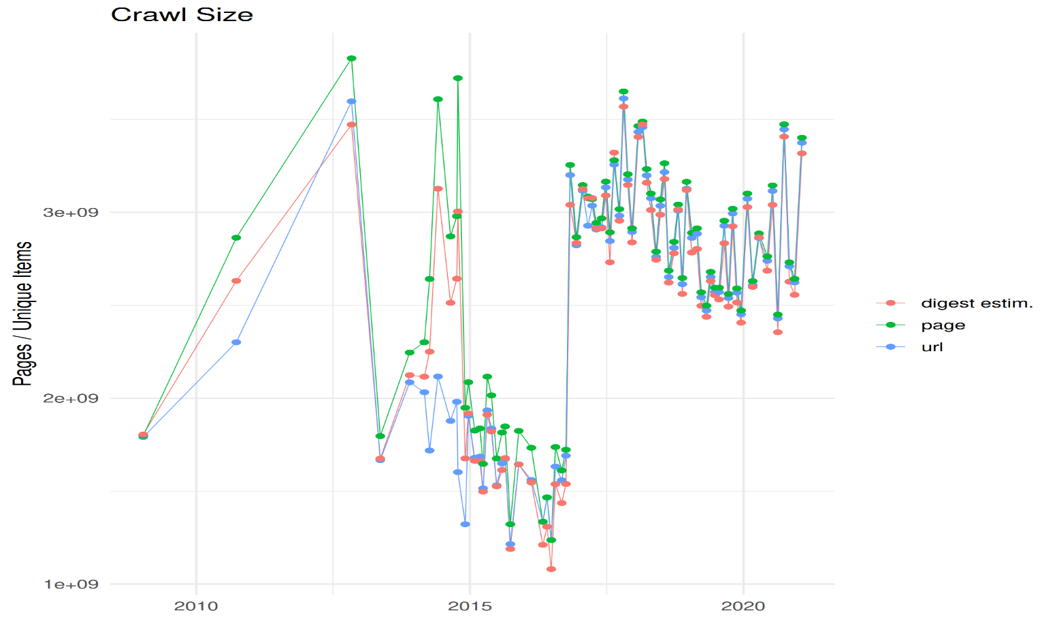

| >Common crawl针对原始网页数据进行了多个维度的分析统计工作,相关的统计分析参考[cc-crawl-statistics](https://commoncrawl.github.io/cc-crawl-statistics/)网站,如: | |||

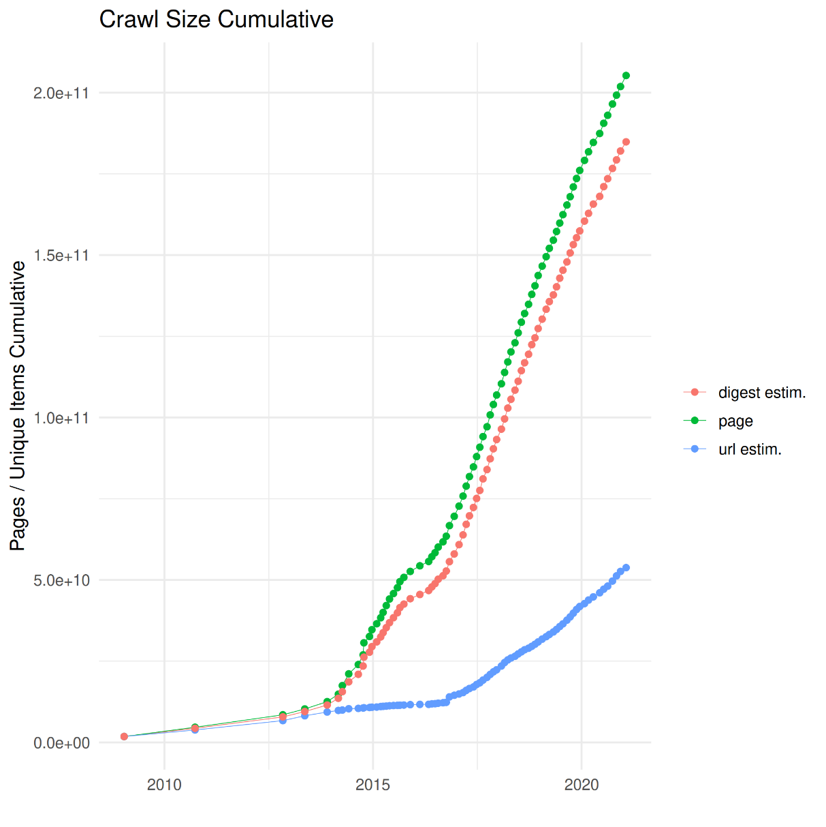

| 截止2020年底累积网页数统计如下图,当目前已经累积约200亿网页。 | |||

| <img src="./docs/CC_total_size.png" width="550" height="400"/><br/> | |||

| 每月发布的爬取页面数量统计图: | |||

| <img src="./docs/CC_data_size_stat.png" width="550" height="400"/><br/> | |||

| Common Crawl网页中域名分布图: | |||

| <img src="./docs/CC_domain.png" width="550" height="400"/><br/> | |||

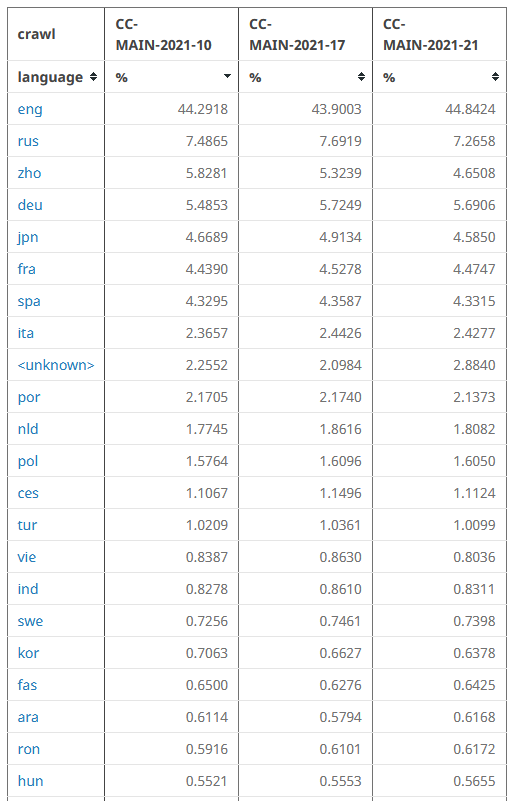

| Common Crawl数据中各种语言比例统计表(部分): | |||

| <img src="./docs/CC_language.png" width="450" height="600"/><br/> | |||

| ### (3)已有基于Common Crawl数据的研究工作 | |||

| 目前已经有相当多的基于common crawl数据进行数据抽取、算法研究等相关工作: | |||

| - [Extracting Job Ads from Common Crawl](https://skeptric.com/common-crawl-job-ads/) | |||

| - [构建大规模新闻数据集](https://doi.org/10.1145/3340531.3412762) | |||

| - [多语言语料构建](https://arxiv.org/abs/2104.08758) | |||

| - [More](https://commoncrawl.org/the-data/examples/) --> | |||

| ## 基于common crawl WET格式原始数据清洗过滤方法 | |||

| ### 不同语言数据分类过滤 | |||

| 通过不同语言的Unicode编码范围可以快速过滤出相应语言的网页,[languageExtract.py](./src/languageExtract.py)提供了同时抽取多种语言单语言语料的工具。在实际数据抽取中可能还需要同时考虑原始数据的编码格式、网页结构等因素,构建网页黑白名单等方法提高数据抽取效率和数据的质量。除此之外也可以考虑采用文本分类模型等方式进行过滤。 | |||

| ### 基于规则的过滤 | |||

| Common Crawl数据包含较多各种类型的垃圾,如特殊符号、广告、网页标题,通过数据特点构建相应的数据清洗规则往往能比较好的提高数据质量。 | |||

| ### 基于分类模型的垃圾数据过滤 | |||

| 通过上述两个步骤形成的文本数据往往仍然包含大量敏感、黄色、广告等信息,我们通过基于[Fasttext](./src/3-JunkDataFilter)的文本分类模型、关键词等方法对于敏感、黄色、广告等文本信息进行了过滤。 | |||

| ### 大规模数据去重方法 | |||

| 网页内部、不同网页之间均存在文本完全重复的情形,基于Hadoop+Spark平台采用[HashTF+MiniHashLSH](./src/)算法对数据在段落粒度做了文本去重。 | |||

| ## 微信交流群 | |||

| 添加微信入鹏程.盘古α交流群:<img src="./docs/个人微信.jpg" width="270"/><br/> | |||

| <img src="./docs/交流群00.jpg" width="270"/><br/> | |||

BIN

docs/CC_data_size_stat.png

View File

BIN

docs/CC_domain.png

View File

BIN

docs/CC_language.png

View File

BIN

docs/CC_total_size.png

View File

BIN

docs/个人微信.jpg

View File

BIN

docs/交流群00.jpg

View File

BIN

src/.DS_Store

View File

+ 118

- 0

src/1-FilterLanguage/languageExtract.py

View File

| @@ -0,0 +1,118 @@ | |||

| import glob | |||

| import re | |||

| import multiprocessing | |||

| import gzip | |||

| import os | |||

| import copy | |||

| def read_gz_file(path): | |||

| if os.path.exists(path): | |||

| with gzip.open(path, 'r') as pf: | |||

| for line in pf: | |||

| yield line | |||

| else: | |||

| print('the path [{}] is not exist!'.format(path)) | |||

| def process_oneFile(path_input, root_path_save,language_uni_code_dict,lang_rule_str): | |||

| total_rule_str=lang_rule_str | |||

| total_grop = re.compile(total_rule_str) | |||

| real_run_language_uni_code_dict=copy.deepcopy(language_uni_code_dict) | |||

| for key in language_uni_code_dict: | |||

| file_path_save = root_path_save + key + "/"+ path_input.split("/")[-2] + "-" + path_input.split("/")[-1] + ".txt" | |||

| if os.path.exists(file_path_save): | |||

| print("file has already cleaned:", file_path_save) | |||

| real_run_language_uni_code_dict.pop(key) | |||

| #open save file | |||

| save_texts_dict={} | |||

| for key in real_run_language_uni_code_dict: | |||

| # print(key) | |||

| texts=[] | |||

| save_texts_dict[key]=texts | |||

| with gzip.open(path_input, 'rt') as f: | |||

| lines = f.readlines() | |||

| for line in lines: | |||

| if line =='\n': | |||

| for key in real_run_language_uni_code_dict: | |||

| temp_text_list = save_texts_dict[key] | |||

| temp_text_list.append(line) | |||

| continue | |||

| if line: | |||

| total_res='' | |||

| total_res_str = total_res.join(total_grop.findall(line)) | |||

| rate = len(total_res_str) / len(line) | |||

| if rate < 0.2: | |||

| continue | |||

| for key,value in real_run_language_uni_code_dict.items(): | |||

| grop = re.compile(value) | |||

| res='' | |||

| all_str=res.join(grop.findall(line)) | |||

| rate=len(all_str)/len(line) | |||

| if rate>0.2 and len(line)>30: | |||

| temp_text_list=save_texts_dict[key] | |||

| temp_text_list.append(line) | |||

| for key in real_run_language_uni_code_dict: | |||

| # print(key) | |||

| file_path_save=root_path_save+key+"/"+path_input.split("/")[-2]+"-"+path_input.split("/")[-1]+".txt" | |||

| if not os.path.exists(root_path_save+key+"/"): | |||

| os.makedirs(root_path_save+key+"/") | |||

| file_w = open(file_path_save, 'w', encoding='utf-8') | |||

| write_texts=save_texts_dict[key] | |||

| fore_line='' | |||

| for index in range(len(write_texts)): | |||

| line=write_texts[index] | |||

| if fore_line=='\n' and line=='\n': | |||

| continue | |||

| file_w.write(line) | |||

| fore_line=line | |||

| file_w.close() | |||

| if __name__ == '__main__': | |||

| #input data path and output path | |||

| paths_list = ["/gdata/commonCrawl/common-crawl-WET-20201124-v2-ori/*/*.warc.wet.gz"] | |||

| output_root_paths = "/gdata/commonCrawl/multi-lingual-ethnic/" | |||

| #different language unicode config | |||

| language_uni_code_dict = {'Tangut': '[\u17000-\u187FF]', | |||

| 'Miao': '[\u16F00-\u16F9F]', | |||

| 'Lisu': '[\uA4D0-\uA4FF]', | |||

| 'Yi': '[\uA000-\uA4CF]', | |||

| 'Devanagari': '[\u0900-\u097F]'} | |||

| total_rule_str = '[\u17000-\u187FF' \ | |||

| '\u16F00-\u16F9F' \ | |||

| '\uA4D0-\uA4FF' \ | |||

| '\uA000-\uA4CF' \ | |||

| '\u0900-\u097F]' | |||

| #get input files | |||

| original_file_paths = [] | |||

| for path in paths_list: | |||

| original_file_paths.extend(list(glob.glob(path))) | |||

| print(path) | |||

| #prepare input file and output file | |||

| all_input_file_paths=[] | |||

| save_file_paths=[] | |||

| file_num=0 | |||

| for file_path in original_file_paths: | |||

| file_num+=1 | |||

| all_input_file_paths.append(file_path) | |||

| save_file_paths.append(output_root_paths) | |||

| print("file num:",file_num) | |||

| #extract different lanuage data | |||

| num_processes = 300 | |||

| pool = multiprocessing.Pool(processes = num_processes) | |||

| for input_file,save_path in zip(all_input_file_paths,save_file_paths): | |||

| pool.apply_async(process_oneFile, (input_file,save_path,)) | |||

| pool.close() | |||

| pool.join() | |||

BIN

src/3-JunkDataFilter/.DS_Store

View File

+ 189

- 0

src/3-JunkDataFilter/Filter_run.py

View File

| @@ -0,0 +1,189 @@ | |||

| # coding: UTF-8 | |||

| import time | |||

| import os | |||

| import torch | |||

| import numpy as np | |||

| from tqdm import tqdm | |||

| from train_eval import train, init_network | |||

| from importlib import import_module | |||

| import argparse | |||

| import torch.nn.functional as F | |||

| from utils_fasttext import build_dataset, build_iterator, get_time_dif, build_pre_dataset | |||

| import jieba | |||

| import pickle as pkl | |||

| from trie_tree_match import Trie_tree | |||

| import random | |||

| import glob | |||

| parser = argparse.ArgumentParser(description='Chinese Text Classification') | |||

| parser.add_argument('--model', type=str, default='FastText', help='') | |||

| parser.add_argument('--model_path', type=str, default='ckpt-v1/saved_dict/FastText.ckpt', help='') | |||

| parser.add_argument('--embedding', default='pre_trained', type=str, help='random or pre_trained') | |||

| parser.add_argument('--word', default=False, type=bool, help='True for word, False for char') | |||

| parser.add_argument('--Mode', default="predict", type=str, help='train/predict') | |||

| parser.add_argument('--predict_path', default="/workspace/input_data_path/", type=str, help='使用绝对路径') | |||

| parser.add_argument('--predict_result_save_path', default="/workspace/output_data_path/", type=str, help='清洗结果保存路径,使用绝对路径') | |||

| parser.add_argument('--device_id', default="0", type=str, help='GPU ID') | |||

| args = parser.parse_args() | |||

| os.environ["CUDA_VISIBLE_DEVICES"] = args.device_id#'0' | |||

| def predict(config, model, predict_iter): | |||

| predict_all = [] | |||

| probility_all = [] | |||

| with torch.no_grad(): | |||

| for texts, _ in predict_iter: | |||

| # print("text:",len(texts)) | |||

| outputs = model(texts) | |||

| probilitys = F.softmax(outputs) | |||

| p_batch = torch.max(probilitys.data, 1)[0].cpu().numpy() | |||

| predict_batch = torch.max(probilitys.data, 1)[1].cpu().numpy() | |||

| predict_all.extend(predict_batch) | |||

| probility_all.extend(p_batch) | |||

| # print("*****batch probility length:",len(p_batch)) | |||

| return predict_all, probility_all | |||

| def prepare_vocab(): | |||

| vocab_dir = "敏感词库" | |||

| text_names = [x for x in os.listdir(vocab_dir) if x.endswith(".txt")] | |||

| vocabs_ = [] | |||

| for txt_name in text_names: | |||

| with open(f"{vocab_dir}/{txt_name}",encoding="utf-8") as f: | |||

| vocabs = f.read().splitlines() | |||

| vocabs_.extend(vocabs) | |||

| vocabs_=list(set(vocabs_)) | |||

| vocabs_.sort() | |||

| with open("敏感词库/total.txt","w",encoding="utf-8") as w: | |||

| w.write("\n".join(vocabs_)) | |||

| def load_vocab(path = "敏感词库/色情词库.txt"): | |||

| with open(path,encoding="utf-8") as f: | |||

| vocabs = f.read().splitlines() | |||

| return vocabs | |||

| def filter_by_trie(text,trie_model,threhold = 3): | |||

| """ | |||

| 0:dirty | |||

| 1:clean | |||

| """ | |||

| res = trie_model.find_one(text) | |||

| if len(res)>threhold: | |||

| return 0 | |||

| elif len(res)>=1 and len(text)<200: | |||

| return 0 | |||

| else: | |||

| return 1 | |||

| if __name__ == '__main__': | |||

| # prepare_vocab() | |||

| dirty_vocab = load_vocab("./敏感词库/色情词库.txt") | |||

| trie_model = Trie_tree() | |||

| trie_model.load_vocab(dirty_vocab) | |||

| # jieba.load_userdict(dirty_vocab) | |||

| dataset = 'filter-v3-length200' # 数据集 | |||

| model_name = args.model # FastText | |||

| x = import_module('models.' + model_name) | |||

| config = x.Config(dataset, embedding='random') | |||

| config.save_path = args.model_path | |||

| np.random.seed(1) | |||

| torch.manual_seed(1) | |||

| torch.cuda.manual_seed_all(1) | |||

| torch.backends.cudnn.deterministic = True # 保证每次结果一样 | |||

| ## 设置预测路径 | |||

| # text_paths = [x for x in os.listdir(args.predict_path) if x.endswith(".txt")] | |||

| text_paths=[] | |||

| data_path_glob = args.predict_path+'part-*' | |||

| text_paths.extend(list(glob.glob(data_path_glob))) | |||

| #预测结果路径 | |||

| res_dir = args.predict_result_save_path | |||

| if not os.path.exists(res_dir): | |||

| os.mkdir(res_dir) | |||

| total_path_num=0 | |||

| total_time=0 | |||

| #加载词表 | |||

| if os.path.exists(config.vocab_path): | |||

| vocab = pkl.load(open(config.vocab_path, 'rb')) | |||

| else: | |||

| vocab = build_vocab(config.train_path, tokenizer=tokenizer, max_size=MAX_VOCAB_SIZE, min_freq=1) | |||

| pkl.dump(vocab, open(config.vocab_path, 'wb')) | |||

| # 加载模型 | |||

| config.n_vocab = len(vocab) | |||

| model = x.Model(config).to(config.device) | |||

| print(config.device) | |||

| model.load_state_dict(torch.load(config.save_path)) | |||

| model.eval() | |||

| random.shuffle(text_paths) | |||

| for i in range(len(text_paths)): | |||

| text_path=text_paths[i] | |||

| try: | |||

| start_time=time.clock() | |||

| total_path_num+=1 | |||

| # dirty_file_name = text_path.replace(".txt", "_dirty.txt") | |||

| # dirty_file_path = f"{res_dir}/{dirty_file_name}" | |||

| clean_file_path=args.predict_result_save_path +"train/"+ text_path.split('/')[-1]+'-clean.txt' | |||

| dirty_file_path=args.predict_result_save_path + "dirty/"+text_path.split('/')[-1] + '-dirty.txt' | |||

| if os.path.exists(clean_file_path): | |||

| print("file has already cleaned:",text_path) | |||

| continue | |||

| if os.path.exists(dirty_file_path): | |||

| continue | |||

| config.predict_path = text_path #f"{args.predict_path}/{text_path}" | |||

| ## 预测 | |||

| predict_data, all_text = build_pre_dataset(config, args.word,vocab) | |||

| # print("-----predict_data length:",len(predict_data)) | |||

| if len(all_text)==0: | |||

| continue | |||

| predict_iter = build_iterator(predict_data, config) | |||

| predict_all,probility_all=predict(config, model, predict_iter) | |||

| label2str = {0: "【垃圾】", 1: "正常文本"} | |||

| index_line = 0 | |||

| clean_file=open(clean_file_path,"w",encoding="utf-8") | |||

| dirty_file=open(dirty_file_path,"w",encoding="utf-8") | |||

| for index_line in range(len(all_text)): | |||

| text=all_text[index_line] | |||

| if text: | |||

| p=probility_all[index_line] | |||

| label = predict_all[index_line] | |||

| label_trie = filter_by_trie(text, trie_model,3) | |||

| if label == 0 and p>0.8 and text!="\n": | |||

| dirty_file.writelines("<<score:"+str(p)+">>\n"+text) | |||

| dirty_file.writelines("\n\n") | |||

| elif label_trie == 0: | |||

| dirty_file.writelines("by trie-tree match:"+text) | |||

| dirty_file.writelines("\n\n") | |||

| else: | |||

| clean_file.writelines(text) | |||

| # clean_file.writelines("<<score:"+str(p)+">>\n"+text) | |||

| clean_file.writelines("\n\n") | |||

| # print("-----------one file spend time:",time.clock()-start_time) | |||

| total_time+=(time.clock()-start_time) | |||

| if total_path_num%5==0: | |||

| print("text path:",text_path) | |||

| print("predict result lengith:",len(probility_all)) | |||

| print("file text lengith:",len(all_text)) | |||

| print("current file num:",total_path_num," all num:",len(text_paths)) | |||

| print("-----------one file average time:",total_time/total_path_num) | |||

| # print("-----------one sample spend time:",(time.clock()-start_time)/len(all_text),"samples:",len(all_text)) | |||

| except: | |||

| print("the exception occured in this txt file:",text_path) | |||

| continue | |||

| print("-----------total time:",total_time) | |||

| print("-----------total num:",total_path_num) | |||

+ 21

- 0

src/3-JunkDataFilter/LICENSE

View File

| @@ -0,0 +1,21 @@ | |||

| MIT License | |||

| Copyright (c) 2019 huwenxing | |||

| Permission is hereby granted, free of charge, to any person obtaining a copy | |||

| of this software and associated documentation files (the "Software"), to deal | |||

| in the Software without restriction, including without limitation the rights | |||

| to use, copy, modify, merge, publish, distribute, sublicense, and/or sell | |||

| copies of the Software, and to permit persons to whom the Software is | |||

| furnished to do so, subject to the following conditions: | |||

| The above copyright notice and this permission notice shall be included in all | |||

| copies or substantial portions of the Software. | |||

| THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR | |||

| IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, | |||

| FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE | |||

| AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER | |||

| LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, | |||

| OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE | |||

| SOFTWARE. | |||

BIN

src/3-JunkDataFilter/models/.DS_Store

View File

+ 95

- 0

src/3-JunkDataFilter/models/FastText.py

View File

| @@ -0,0 +1,95 @@ | |||

| # coding: UTF-8 | |||

| import torch | |||

| import torch.nn as nn | |||

| import torch.nn.functional as F | |||

| import numpy as np | |||

| class Config(object): | |||

| """配置参数""" | |||

| def __init__(self, dataset, embedding): | |||

| self.model_name = 'FastText' | |||

| self.train_path = dataset + '/data/train.txt' # 训练集 | |||

| self.dev_path = dataset + '/data/dev.txt' # 验证集 | |||

| self.test_path = dataset + '/data/test.txt' # 测试集 | |||

| self.predict_path = None # 预测 | |||

| self.class_list = [x.strip() for x in open( | |||

| dataset + '/data/class.txt', encoding='utf-8').readlines()] # 类别名单 | |||

| self.vocab_path = dataset + '/data/vocab.pkl' # 词表 | |||

| self.save_path = dataset + '/saved_dict/' + self.model_name + '.ckpt' # 模型训练结果 | |||

| self.log_path = dataset + '/log/' + self.model_name | |||

| self.embedding_pretrained = torch.tensor( | |||

| np.load(dataset + '/data/' + embedding)["embeddings"].astype('float32'))\ | |||

| if embedding != 'random' else None # 预训练词向量 | |||

| self.device = torch.device("cuda" if torch.cuda.is_available() else 'cpu') # 设备 | |||

| #self.device = torch.device('cpu') # 设备 | |||

| self.weight_decay = 1e-5 # L2正则系数 | |||

| self.dropout = 0.5 # 随机失活 | |||

| self.require_improvement = 1000 # 若超过1000batch效果还没提升,则提前结束训练 | |||

| self.num_classes = len(self.class_list) # 类别数 | |||

| self.n_vocab = 0 # 词表大小,在运行时赋值 | |||

| self.num_epochs = 20 # epoch数 | |||

| self.batch_size = 128*10 # mini-batch大小 | |||

| self.pad_size = 200 # 每句话处理成的长度(短填长切) | |||

| self.learning_rate = 1e-3 # 学习率 | |||

| self.embed = self.embedding_pretrained.size(1)\ | |||

| if self.embedding_pretrained is not None else 300 # 字向量维度 | |||

| self.hidden_size = 128 # 隐藏层大小 | |||

| self.n_gram_vocab = 250499 # ngram 词表大小 | |||

| self.use_ngram = True # 使用ngram | |||

| def print_config(self): | |||

| print(f"save_path={self.save_path}") | |||

| print(f"device={self.device}") | |||

| print(f"weight_decay={self.weight_decay}") | |||

| print(f"dropout={self.dropout}") | |||

| print(f"require_improvement={self.require_improvement}") | |||

| print(f"num_classes={self.num_classes}") | |||

| print(f"n_vocab={self.n_vocab}") | |||

| print(f"num_epochs={self.num_epochs}") | |||

| print(f"batch_size={self.batch_size}") | |||

| print(f"pad_size={self.pad_size}") | |||

| print(f"learning_rate={self.learning_rate}") | |||

| print(f"hidden_size={self.hidden_size}") | |||

| print(f"n_gram_vocab={self.n_gram_vocab}") | |||

| print(f"use_ngram={self.use_ngram}") | |||

| '''Bag of Tricks for Efficient Text Classification''' | |||

| class Model(nn.Module): | |||

| def __init__(self, config): | |||

| super(Model, self).__init__() | |||

| self.use_ngram = config.use_ngram | |||

| if config.embedding_pretrained is not None: | |||

| self.embedding = nn.Embedding.from_pretrained(config.embedding_pretrained, freeze=False) | |||

| else: | |||

| self.embedding = nn.Embedding(config.n_vocab, config.embed, padding_idx=config.n_vocab - 1) | |||

| if config.use_ngram: | |||

| self.embedding_ngram2 = nn.Embedding(config.n_gram_vocab, config.embed) | |||

| self.embedding_ngram3 = nn.Embedding(config.n_gram_vocab, config.embed) | |||

| self.fc1 = nn.Linear(config.embed * 3, config.hidden_size) | |||

| else: | |||

| self.fc1 = nn.Linear(config.embed, config.hidden_size) | |||

| self.dropout = nn.Dropout(config.dropout) | |||

| # self.dropout2 = nn.Dropout(config.dropout) | |||

| self.fc2 = nn.Linear(config.hidden_size, config.num_classes) | |||

| def forward(self, x): | |||

| out_word = self.embedding(x[0]) | |||

| if self.use_ngram: | |||

| out_bigram = self.embedding_ngram2(x[2]) | |||

| out_trigram = self.embedding_ngram3(x[3]) | |||

| out = torch.cat((out_word, out_bigram, out_trigram), -1) | |||

| else: | |||

| out = out_word | |||

| out = out.mean(dim=1) | |||

| out = self.dropout(out) | |||

| out = self.fc1(out) | |||

| out = F.relu(out) | |||

| out = self.fc2(out) | |||

| return out | |||

BIN

src/3-JunkDataFilter/models/__pycache__/FastText.cpython-36.pyc

View File

+ 24

- 0

src/3-JunkDataFilter/sensitive-words/new-words.txt

View File

| @@ -0,0 +1,24 @@ | |||

| 传奇私服 | |||

| 热血传奇 | |||

| 传奇私服网站 | |||

| 大色窝 | |||

| 夜夜撸 | |||

| 色播播 | |||

| 同城交友 | |||

| 虐奸 | |||

| 骚屄 | |||

| 成av人 | |||

| 天天色 | |||

| 天天操 | |||

| 狠狠日 | |||

| 大香蕉网 | |||

| 人人操 | |||

| 伊人久久 | |||

| 人人干免费在线视频 | |||

| 传奇私服 | |||

| 热血传奇 | |||

| 传奇私服网站 | |||

| 新开传奇 | |||

| 时时彩 | |||

| 撸撸色 | |||

| 色中色 | |||

+ 14600

- 0

src/3-JunkDataFilter/sensitive-words/sensitive_words_v1.txt

File diff suppressed because it is too large

View File

+ 16326

- 0

src/3-JunkDataFilter/sensitive-words/total.txt

File diff suppressed because it is too large

View File

+ 16367

- 0

src/3-JunkDataFilter/sensitive-words/total备份.txt

File diff suppressed because it is too large

View File

+ 724

- 0

src/3-JunkDataFilter/sensitive-words/yellow_words-v2.txt

View File

| @@ -0,0 +1,724 @@ | |||

| 爱女人 | |||

| 爱液 | |||

| 野战 | |||

| 按摩棒 | |||

| 拔出来 | |||

| 夜色聊人 | |||

| 爆草 | |||

| 包二奶 | |||

| 暴干 | |||

| 暴奸 | |||

| 暴乳 | |||

| 爆乳 | |||

| 暴淫 | |||

| 屄 | |||

| 被操 | |||

| 被插 | |||

| 被干 | |||

| 逼奸 | |||

| 仓井空 | |||

| 插暴 | |||

| 操逼 | |||

| 操黑 | |||

| 操烂 | |||

| 肏你 | |||

| 肏死 | |||

| 操死 | |||

| 操我 | |||

| 厕奴 | |||

| 插比 | |||

| 插b | |||

| 插逼 | |||

| 插进 | |||

| 插你 | |||

| 插我 | |||

| 插阴 | |||

| 潮吹 | |||

| 潮喷 | |||

| 成人dv | |||

| 成人电影 | |||

| 成人论坛 | |||

| 成人小说 | |||

| 成人电 | |||

| 成人卡通 | |||

| 成人聊 | |||

| 成人片 | |||

| 成人视 | |||

| 成人图 | |||

| 成人文 | |||

| 成人小 | |||

| 成人色情 | |||

| 成人网站 | |||

| 成人文学 | |||

| 艳情小说 | |||

| 成人游戏 | |||

| 吃精 | |||

| 赤裸 | |||

| 扌由插 | |||

| 抽一插 | |||

| 春药 | |||

| 大波 | |||

| 大力抽送 | |||

| 大乳 | |||

| 荡妇 | |||

| 荡女 | |||

| 盗撮 | |||

| 多人轮 | |||

| 发浪 | |||

| 放尿 | |||

| 肥逼 | |||

| 粉穴 | |||

| 封面女郎 | |||

| 风月大陆 | |||

| 干死你 | |||

| 干穴 | |||

| 肛交 | |||

| 肛门 | |||

| 龟头 | |||

| 裹本 | |||

| 国产av | |||

| 好嫩 | |||

| 豪乳 | |||

| 黑逼 | |||

| 后庭 | |||

| 后穴 | |||

| 虎骑 | |||

| 花花公子 | |||

| 换妻俱乐部 | |||

| 黄片 | |||

| 几吧 | |||

| 鸡吧 | |||

| 鸡巴 | |||

| 鸡奸 | |||

| 寂寞男 | |||

| 寂寞女 | |||

| 妓女 | |||

| 集体淫 | |||

| 奸情 | |||

| 叫床 | |||

| 脚交 | |||

| 金鳞岂是池中物 | |||

| 金麟岂是池中物 | |||

| 精液 | |||

| 就去日 | |||

| 巨屌 | |||

| 菊花洞 | |||

| 菊门 | |||

| 巨奶 | |||

| 巨乳 | |||

| 菊穴 | |||

| 开苞 | |||

| 口爆 | |||

| 口活 | |||

| 口交 | |||

| 口射 | |||

| 口淫 | |||

| 裤袜 | |||

| 狂操 | |||

| 狂插 | |||

| 浪逼 | |||

| 浪妇 | |||

| 浪叫 | |||

| 浪女 | |||

| 狼友 | |||

| 聊性 | |||

| 流淫 | |||

| 铃木麻 | |||

| 凌辱 | |||

| 漏乳 | |||

| 露b | |||

| 乱交 | |||

| 乱伦 | |||

| 轮暴 | |||

| 轮操 | |||

| 轮奸 | |||

| 裸陪 | |||

| 买春 | |||

| 美逼 | |||

| 美少妇 | |||

| 美乳 | |||

| 美腿 | |||

| 美穴 | |||

| 美幼 | |||

| 秘唇 | |||

| 迷奸 | |||

| 密穴 | |||

| 蜜穴 | |||

| 蜜液 | |||

| 摸奶 | |||

| 摸胸 | |||

| 母奸 | |||

| 奈美 | |||

| 奶子 | |||

| 男奴 | |||

| 内射 | |||

| 嫩逼 | |||

| 嫩女 | |||

| 嫩穴 | |||

| 捏弄 | |||

| 女优 | |||

| 炮友 | |||

| 砲友 | |||

| 喷精 | |||

| 屁眼 | |||

| 品香堂 | |||

| 前凸后翘 | |||

| 强jian | |||

| 强暴 | |||

| 强奸处女 | |||

| 情趣用品 | |||

| 情色 | |||

| 拳交 | |||

| 全裸 | |||

| 群交 | |||

| 惹火身材 | |||

| 人妻 | |||

| 人兽 | |||

| 日逼 | |||

| 日烂 | |||

| 肉棒 | |||

| 肉逼 | |||

| 肉唇 | |||

| 肉洞 | |||

| 肉缝 | |||

| 肉棍 | |||

| 肉茎 | |||

| 肉具 | |||

| 揉乳 | |||

| 肉穴 | |||

| 肉欲 | |||

| 乳爆 | |||

| 乳房 | |||

| 乳沟 | |||

| 乳交 | |||

| 乳头 | |||

| 三级片 | |||

| 骚逼 | |||

| 骚比 | |||

| 骚女 | |||

| 骚水 | |||

| 骚穴 | |||

| 色逼 | |||

| 色界 | |||

| 色猫 | |||

| 色盟 | |||

| 色情网站 | |||

| 色区 | |||

| 色色 | |||

| 色诱 | |||

| 色欲 | |||

| 色b | |||

| 少年阿宾 | |||

| 少修正 | |||

| 射爽 | |||

| 射颜 | |||

| 食精 | |||

| 释欲 | |||

| 兽奸 | |||

| 兽交 | |||

| 手淫 | |||

| 兽欲 | |||

| 熟妇 | |||

| 熟母 | |||

| 熟女 | |||

| 爽片 | |||

| 爽死我了 | |||

| 双臀 | |||

| 死逼 | |||

| 丝袜 | |||

| 丝诱 | |||

| 松岛枫 | |||

| 酥痒 | |||

| 汤加丽 | |||

| 套弄 | |||

| 体奸 | |||

| 体位 | |||

| 舔脚 | |||

| 舔阴 | |||

| 调教 | |||

| 偷欢 | |||

| 偷拍 | |||

| 推油 | |||

| 脱内裤 | |||

| 文做 | |||

| 我就色 | |||

| 无码 | |||

| 舞女 | |||

| 无修正 | |||

| 吸精 | |||

| 夏川纯 | |||

| 相奸 | |||

| 小逼 | |||

| 校鸡 | |||

| 小xue | |||

| 写真 | |||

| 性感妖娆 | |||

| 性感诱惑 | |||

| 性虎 | |||

| 性饥渴 | |||

| 性技巧 | |||

| 性交 | |||

| 性奴 | |||

| 性虐 | |||

| 性息 | |||

| 性欲 | |||

| 胸推 | |||

| 穴口 | |||

| 学生妹 | |||

| 穴图 | |||

| 亚情 | |||

| 颜射 | |||

| 阳具 | |||

| 要射了 | |||

| 夜勤病栋 | |||

| 一本道 | |||

| 一夜欢 | |||

| 一夜情 | |||

| 一ye情 | |||

| 阴部 | |||

| 淫虫 | |||

| 阴唇 | |||

| 淫荡 | |||

| 阴道 | |||

| 淫电影 | |||

| 阴阜 | |||

| 淫妇 | |||

| 淫河 | |||

| 阴核 | |||

| 阴户 | |||

| 淫贱 | |||

| 淫叫 | |||

| 淫教师 | |||

| 阴茎 | |||

| 阴精 | |||

| 淫浪 | |||

| 淫媚 | |||

| 淫糜 | |||

| 淫魔 | |||

| 淫母 | |||

| 淫女 | |||

| 淫虐 | |||

| 淫妻 | |||

| 淫情 | |||

| 淫色 | |||

| 淫声浪语 | |||

| 淫兽学园 | |||

| 淫书 | |||

| 淫术炼金士 | |||

| 淫水 | |||

| 淫娃 | |||

| 淫威 | |||

| 淫亵 | |||

| 淫样 | |||

| 淫液 | |||

| 淫照 | |||

| 阴b | |||

| 应召 | |||

| 幼交 | |||

| 幼男 | |||

| 幼女 | |||

| 欲火 | |||

| 欲女 | |||

| 玉女心经 | |||

| 玉蒲团 | |||

| 玉乳 | |||

| 欲仙欲死 | |||

| 玉穴 | |||

| 援交 | |||

| 原味内衣 | |||

| 援助交际 | |||

| 张筱雨 | |||

| 招鸡 | |||

| 招妓 | |||

| 中年美妇 | |||

| 抓胸 | |||

| 自拍 | |||

| 自慰 | |||

| 作爱 | |||

| 18禁 | |||

| 99bb | |||

| a4u | |||

| a4y | |||

| adult | |||

| amateur | |||

| anal | |||

| a片 | |||

| fuck | |||

| gay片 | |||

| g点 | |||

| g片 | |||

| hardcore | |||

| h动画 | |||

| h动漫 | |||

| incest | |||

| porn | |||

| secom | |||

| sexinsex | |||

| sm女王 | |||

| xiao77 | |||

| xing伴侣 | |||

| tokyohot | |||

| yin荡 | |||

| 贱人 | |||

| 装b | |||

| 大sb | |||

| 傻逼 | |||

| 傻b | |||

| 煞逼 | |||

| 煞笔 | |||

| 刹笔 | |||

| 傻比 | |||

| 沙比 | |||

| 欠干 | |||

| 婊子养的 | |||

| 我日你 | |||

| 我操 | |||

| 我草 | |||

| 卧艹 | |||

| 卧槽 | |||

| 爆你菊 | |||

| 艹你 | |||

| cao你 | |||

| 你他妈 | |||

| 真他妈 | |||

| 别他吗 | |||

| 草你吗 | |||

| 草你丫 | |||

| 操你妈 | |||

| 擦你妈 | |||

| 操你娘 | |||

| 操他妈 | |||

| 日你妈 | |||

| 干你妈 | |||

| 干你娘 | |||

| 娘西皮 | |||

| 狗操 | |||

| 狗草 | |||

| 狗杂种 | |||

| 狗日的 | |||

| 操你祖宗 | |||

| 操你全家 | |||

| 操你大爷 | |||

| 妈逼 | |||

| 你麻痹 | |||

| 麻痹的 | |||

| 妈了个逼 | |||

| 马勒 | |||

| 狗娘养 | |||

| 贱比 | |||

| 贱b | |||

| 下贱 | |||

| 死全家 | |||

| 全家死光 | |||

| 全家不得好死 | |||

| 全家死绝 | |||

| 白痴 | |||

| 无耻 | |||

| sb | |||

| 杀b | |||

| 你吗b | |||

| 你妈的 | |||

| 婊子 | |||

| 贱货 | |||

| 人渣 | |||

| 混蛋 | |||

| 媚外 | |||

| 和弦 | |||

| 性伴侣 | |||

| 男公关 | |||

| 火辣 | |||

| 精子 | |||

| 射精 | |||

| 诱奸 | |||

| 强奸 | |||

| 做爱 | |||

| 性爱 | |||

| 发生关系 | |||

| 按摩 | |||

| 快感 | |||

| 处男 | |||

| 猛男 | |||

| 少妇 | |||

| 屌 | |||

| 屁股 | |||

| 下体 | |||

| 内裤 | |||

| 浑圆 | |||

| 咪咪 | |||

| 发情 | |||

| 白嫩 | |||

| 粉嫩 | |||

| 兽性 | |||

| 风骚 | |||

| 呻吟 | |||

| sm | |||

| 阉割 | |||

| 高潮 | |||

| 裸露 | |||

| 不穿 | |||

| 一丝不挂 | |||

| 脱光 | |||

| 干你 | |||

| 干死 | |||

| 我干 | |||

| 裙中性运动 | |||

| 乱奸 | |||

| 乱伦类 | |||

| 乱伦小 | |||

| 伦理大 | |||

| 伦理电影 | |||

| 伦理毛 | |||

| 伦理片 | |||

| 裸聊 | |||

| 裸聊网 | |||

| 裸体写真 | |||

| 裸舞视 | |||

| 裸照 | |||

| 美女裸体 | |||

| 美女写真 | |||

| 美女上门 | |||

| 美艳少妇 | |||

| 妹按摩 | |||

| 妹上门 | |||

| 迷幻药 | |||

| 迷幻藥 | |||

| 迷昏口 | |||

| 迷昏药 | |||

| 迷昏藥 | |||

| 迷魂香 | |||

| 迷魂药 | |||

| 迷魂藥 | |||

| 迷奸粉 | |||

| 迷奸药 | |||

| 迷情粉 | |||

| 迷情水 | |||

| 迷情药 | |||

| 迷药 | |||

| 迷藥 | |||

| 谜奸药 | |||

| 骚妇 | |||

| 骚货 | |||

| 骚浪 | |||

| 骚嘴 | |||

| 色电影 | |||

| 色妹妹 | |||

| 色情表演 | |||

| 色情电影 | |||

| 色情服务 | |||

| 色情图片 | |||

| 色情小说 | |||

| 色情影片 | |||

| 色情片 | |||

| 色视频 | |||

| 色小说 | |||

| 性服务 | |||

| 性福情 | |||

| 性感少 | |||

| 性伙伴 | |||

| 性交视频 | |||

| 性交图片 | |||

| 性奴集中营 | |||

| 阴蒂 | |||

| 阴间来电 | |||

| 阴茎增大 | |||

| 阴茎助勃 | |||

| 阴毛 | |||

| 陰唇 | |||

| 陰道 | |||

| 陰戶 | |||

| 淫荡美女 | |||

| 淫荡视频 | |||

| 淫荡照片 | |||

| 淫乱 | |||

| 淫靡 | |||

| 淫魔舞 | |||

| 淫情女 | |||

| 淫肉 | |||

| 淫騷妹 | |||

| 淫兽 | |||

| 淫兽学 | |||

| 淫穴 | |||

| morphine | |||

| 摇头丸 | |||

| 乖乖粉 | |||

| narcotic | |||

| 麻醉药 | |||

| 精神药品 | |||

| 干逼 | |||

| 你妈逼 | |||

| 草你妈 | |||

| 艹你妈 | |||

| 草泥马 | |||

| 曹尼玛 | |||

| 失身粉 | |||

| 淫荡自慰器 | |||

| 啪啪 | |||

| 射了 | |||

| 自慰棒 | |||

| 肉肏 | |||

| 中出 | |||

| 肏 | |||

| 抽插 | |||

| 抠弄 | |||

| 小穴 | |||

| 制服诱惑 | |||

| 干到尖叫 | |||

| 大色窝 | |||

| 夜夜撸 | |||

| 色播播 | |||

| 同城交友 | |||

| 虐奸 | |||

| 骚屄 | |||

| 成av人 | |||

| 天天色 | |||

| 天天操 | |||

| 狠狠日 | |||

| 大香蕉网 | |||

| 人人操 | |||

| 伊人久久 | |||

| 人人干免费在线视频 | |||

| 传奇私服 | |||

| 热血传奇 | |||

| 传奇私服网站 | |||

| 新开传奇 | |||

| 时时彩 | |||

| 撸撸色 | |||

| 色中色 | |||

| 韩国三级电影 | |||

| 在线a视频 | |||

| 国产模特私拍视频 | |||

| av视频 | |||

| 香港经典三级 | |||

| 毛片免费观看 | |||

| 伊人大香蕉 | |||

| 久久网 | |||

| 日本高清色情 | |||

| 欧美色图 | |||

| 亚洲色综合 | |||

| 伊人香蕉视频 | |||

| 三级在线播放 | |||

| 青青草在线 | |||

| 韩国19禁电影 | |||

| 偷偷鲁手机在线 | |||

| 一起撸 | |||

| 人人干免费在线视频 | |||

| 亚洲国产手机在线无码 | |||

| 欧美三级网站 | |||

| 五月婷婷 | |||

| 韩国理论片 | |||

| 福利片_九九 | |||

| 苍苍影院 | |||

| 变色桃花源完整版 | |||

| 亚洲一区 | |||

| 日本一本二本三区无码 | |||

| 日本一区二区三区 | |||

| 亚洲人成视频 | |||

| 免费人成视频 | |||

| 欧美成 人 | |||

| 男女啪 | |||

| 久久爱 | |||

| 免费人成 | |||

| 天天j | |||

| 久久精品视频 | |||

| 国内偷拍在线精品 | |||

| 亚洲国产在线视频 | |||

| 在线aV | |||

| 自拍偷拍 | |||

| 国内偷拍 | |||

| 欧美另类 | |||

| 亚洲色av | |||

| av成人网 | |||

| 日本av | |||

| 欧美av | |||

| 成人av | |||

| 亚洲av | |||

| av在线 | |||

| av天堂 | |||

| av电影 | |||

| av视频 | |||

| 一级AVA | |||

| 免费AV视频 | |||

| AV视频 | |||

| 免费黄片 | |||

| 天天日 | |||

| 天天啪 | |||

| 日狠狠 | |||

| 国产自拍 | |||

| 深喉 | |||

| 国产AV | |||

| 久久偷拍 | |||

| 一本到2018 | |||

| 快播成人片 | |||

| 亚洲欧美国产 | |||

| 东京热 | |||

| 另类图片 | |||

| 情色五月天 | |||

| 色情五月 | |||

| 伊人在线 | |||

| 亚洲偷拍 | |||

| 国产与偷拍 | |||

| 威尼斯人官网威 | |||

| 日韩无码 | |||

| 无码中字 | |||

| 一级毛片 | |||

| 日本毛片 | |||

| 亚洲毛片 | |||

| 久久不射 | |||

| 色姑娘综合网 | |||

| 天天舔 | |||

| 天天射 | |||

| 婷婷我去也 | |||

| 偷窥女厕所 | |||

| 免费啪视频 | |||

| 亚洲色欲 | |||

| 色老头 | |||

| 妹子自流白浆 | |||

| 国产午夜精华 | |||

| 童颜巨乳 | |||

| SM重口味 | |||

| 美少女 | |||

| 在线视频 | |||

| 欧美极品 | |||

| 日韩无码 | |||

| 日韩有码 | |||

| 极骚萝莉 | |||

| 人妖视频 | |||

| 强奸乱伦 | |||

| 视频三区 | |||

| 绝美少女 | |||

| 国产精品 | |||

| 自拍偷拍 | |||

| 萝莉少女 | |||

| 3P合辑 | |||

| 视频四区 | |||

| 自慰喷水 | |||

| 人妻系列 | |||

| 巨乳系列 | |||

| 处女专栏 | |||

| 无码专区 | |||

| 视频五区 | |||

| 在线视频 | |||

| 亚洲电影 | |||

| 欧美电影 | |||

| 制服丝袜 | |||

| 强奸乱伦 | |||

| 变态另类 | |||

| 经典三级 | |||

| 成人动漫 | |||

| 人妻系列 | |||

| 丝袜诱惑 | |||

| 美臀美颜 | |||

| 日韩无码 | |||

| 巨乳诱惑 | |||

| 颜射口交 | |||

| 激情图区 | |||

| 亚洲色图 | |||

| 欧美色图 | |||

| 乱伦熟女 | |||

| 自拍偷拍 | |||

| 美女诱惑 | |||

| 巨乳诱惑 | |||

| 淫荡人妻 | |||

| 情色文学 | |||

| 人妻交换 | |||

| 家庭乱伦 | |||

+ 1242

- 0

src/3-JunkDataFilter/sensitive-words/yellow_words.txt

File diff suppressed because it is too large

View File

+ 560

- 0

src/3-JunkDataFilter/sensitive-words/反动词库.txt

View File

| @@ -0,0 +1,560 @@ | |||

| 习近平 | |||

| 习仲勋 | |||

| 十九大修宪 | |||

| 习近平连任 | |||

| 宪法修正案 | |||

| 任期限制 | |||

| 腐败中国 | |||

| 三个代表 | |||

| 社会主义灭亡 | |||

| 打倒中国 | |||

| 打倒共产党 | |||

| 打倒共产主义 | |||

| 打倒胡锦涛 | |||

| 打倒江泽民 | |||

| 打倒江主席 | |||

| 打倒罗干 | |||

| 打倒中共 | |||

| 打倒朱镕 | |||

| 抵制共产党 | |||

| 抵制共产主义 | |||

| 抵制胡锦涛 | |||

| 抵制江泽民 | |||

| 抵制江主席 | |||

| 抵制李鹏 | |||

| 抵制罗干 | |||

| 抵制温家宝 | |||

| 抵制中共 | |||

| 抵制朱镕基 | |||

| 灭亡中国 | |||

| 亡党亡国 | |||

| 粉碎四人帮 | |||

| 激流中国 | |||

| 特供 | |||

| 特贡 | |||

| 特共 | |||

| zf大楼 | |||

| 殃视 | |||

| 贪污腐败 | |||

| 强制拆除 | |||

| 形式主义 | |||

| 政治风波 | |||

| 太子党 | |||

| 上海帮 | |||

| 北京帮 | |||

| 清华帮 | |||

| 红色贵族 | |||

| 权贵集团 | |||

| 河蟹社会 | |||

| 喝血社会 | |||

| 九风 | |||

| 9风 | |||

| 十七大 | |||

| 十7大 | |||

| 17da | |||

| 九学 | |||

| 9学 | |||

| 四风 | |||

| 4风 | |||

| 双规 | |||

| 南街村 | |||

| 最淫官员 | |||

| 警匪 | |||

| 官匪 | |||

| 独夫民贼 | |||

| 官商勾结 | |||

| 城管暴力执法 | |||

| 强制捐款 | |||

| 毒豺 | |||

| 一党执政 | |||

| 一党专制 | |||

| 一党专政 | |||

| 专制政权 | |||

| 宪法法院 | |||

| 胡平 | |||

| 苏晓康 | |||

| 贺卫方 | |||

| 谭作人 | |||

| 焦国标 | |||

| 万润南 | |||

| 张志新 | |||

| 辛灝年 | |||

| 高勤荣 | |||

| 王炳章 | |||

| 高智晟 | |||

| 司马璐 | |||

| 刘晓竹 | |||

| 刘宾雁 | |||

| 魏京生 | |||

| 寻找林昭的灵魂 | |||

| 别梦成灰 | |||

| 谁是新中国 | |||

| 讨伐中宣部 | |||

| 异议人士 | |||

| 民运人士 | |||

| 启蒙派 | |||

| 选国家主席 | |||

| 民一主 | |||

| min主 | |||

| 民竹 | |||

| 民珠 | |||

| 民猪 | |||

| chinesedemocracy | |||

| 大赦国际 | |||

| 国际特赦 | |||

| da选 | |||

| 投公 | |||

| 公头 | |||

| 宪政 | |||

| 平反 | |||

| 党章 | |||

| 维权 | |||

| 昝爱宗 | |||

| 宪章 | |||

| 08宪 | |||

| 08xz | |||

| 抿主 | |||

| 敏主 | |||

| 人拳 | |||

| 人木又 | |||

| 人quan | |||

| renquan | |||

| 中国人权 | |||

| 中国新民党 | |||

| 群体事件 | |||

| 群体性事件 | |||

| 上中央 | |||

| 去中央 | |||

| 讨说法 | |||

| 请愿 | |||

| 请命 | |||

| 公开信 | |||

| 联名上书 | |||

| 万人大签名 | |||

| 万人骚动 | |||

| 截访 | |||

| 上访 | |||

| shangfang | |||

| 信访 | |||

| 访民 | |||

| 集合 | |||

| 集会 | |||

| 组织集体 | |||

| 静坐 | |||

| 静zuo | |||

| jing坐 | |||

| 示威 | |||

| 示wei | |||

| 游行 | |||

| you行 | |||

| 油行 | |||

| 游xing | |||

| youxing | |||

| 官逼民反 | |||

| 反party | |||

| 反共 | |||

| 抗议 | |||

| 亢议 | |||

| 抵制 | |||

| 低制 | |||

| 底制 | |||

| di制 | |||

| 抵zhi | |||

| dizhi | |||

| boycott | |||

| 血书 | |||

| 焚烧中国国旗 | |||

| baoluan | |||

| 流血冲突 | |||

| 出现暴动 | |||

| 发生暴动 | |||

| 引起暴动 | |||

| baodong | |||

| 灭共 | |||

| 杀毙 | |||

| 罢工 | |||

| 霸工 | |||

| 罢考 | |||

| 罢餐 | |||

| 霸餐 | |||

| 罢参 | |||

| 罢饭 | |||

| 罢吃 | |||

| 罢食 | |||

| 罢课 | |||

| 罢ke | |||

| 霸课 | |||

| ba课 | |||

| 罢教 | |||

| 罢学 | |||

| 罢运 | |||

| 网特 | |||

| 网评员 | |||

| 网络评论员 | |||

| 五毛党 | |||

| 五毛们 | |||

| 5毛党 | |||

| 戒严 | |||

| jieyan | |||

| jie严 | |||

| 戒yan | |||

| 8的平方事件 | |||

| 知道64 | |||

| 八九年 | |||

| 贰拾年 | |||

| 2o年 | |||

| 20和谐年 | |||

| 贰拾周年 | |||

| 六四 | |||

| 六河蟹四 | |||

| 六百度四 | |||

| 六和谐四 | |||

| 陆四 | |||

| 陆肆 | |||

| 198964 | |||

| 5月35 | |||

| 89年春夏之交 | |||

| 64惨案 | |||

| 64时期 | |||

| 64运动 | |||

| 4事件 | |||

| 四事件 | |||

| 北京风波 | |||

| 学潮 | |||

| 学chao | |||

| xuechao | |||

| 学百度潮 | |||

| 门安天 | |||

| 天按门 | |||

| 坦克压大学生 | |||

| 民主女神 | |||

| 历史的伤口 | |||

| 高自联 | |||

| 北高联 | |||

| 血洗京城 | |||

| 四二六社论 | |||

| 王丹 | |||

| 柴玲 | |||

| 沈彤 | |||

| 封从德 | |||

| 王超华 | |||

| 王维林 | |||

| 吾尔开希 | |||

| 吾尔开西 | |||

| 侯德健 | |||

| 阎明复 | |||

| 方励之 | |||

| 蒋捷连 | |||

| 丁子霖 | |||

| 辛灏年 | |||

| 蒋彦永 | |||

| 严家其 | |||

| 陈一咨 | |||

| 中华局域网 | |||

| 党的喉舌 | |||

| 互联网审查 | |||

| 当局严密封锁 | |||

| 新闻封锁 | |||

| 封锁消息 | |||

| 爱国者同盟 | |||

| 关闭所有论坛 | |||

| 网络封锁 | |||

| 金盾工程 | |||

| gfw | |||

| 无界浏览 | |||

| 无界网络 | |||

| 自由门 | |||

| 何清涟 | |||

| 中国的陷阱 | |||

| 汪兆钧 | |||

| 记者无疆界 | |||

| 境外媒体 | |||

| 维基百科 | |||

| 纽约时报 | |||

| bbc中文网 | |||

| 华盛顿邮报 | |||

| 世界日报 | |||

| 东森新闻网 | |||

| 东森电视 | |||

| 星岛日报 | |||

| wikipedia | |||

| youtube | |||

| googleblogger | |||

| 美国广播公司 | |||

| 英国金融时报 | |||

| 自由亚洲 | |||

| 自由时报 | |||

| 中国时报 | |||

| 反分裂 | |||

| 威胁论 | |||

| 左翼联盟 | |||

| 钓鱼岛 | |||

| 保钓组织 | |||

| 主权 | |||

| 弓单 | |||

| 火乍 | |||

| 木仓 | |||

| 石肖 | |||

| 核蛋 | |||

| 步qiang | |||

| bao炸 | |||

| 爆zha | |||

| baozha | |||

| zha药 | |||

| zha弹 | |||

| 炸dan | |||

| 炸yao | |||

| zhadan | |||

| zhayao | |||

| hmtd | |||

| 三硝基甲苯 | |||

| 六氟化铀 | |||

| 炸药配方 | |||

| 弹药配方 | |||

| 炸弹配方 | |||

| 皮箱炸弹 | |||

| 火药配方 | |||

| 人体炸弹 | |||

| 人肉炸弹 | |||

| 解放军 | |||

| 兵力部署 | |||

| 军转 | |||

| 军事社 | |||

| 8341部队 | |||

| 第21集团军 | |||

| 七大军区 | |||

| 7大军区 | |||

| 北京军区 | |||

| 沈阳军区 | |||

| 济南军区 | |||

| 成都军区 | |||

| 广州军区 | |||

| 南京军区 | |||

| 兰州军区 | |||

| 颜色革命 | |||

| 规模冲突 | |||

| 塔利班 | |||

| 基地组织 | |||

| 恐怖分子 | |||

| 恐怖份子 | |||

| 三股势力 | |||

| 印尼屠华 | |||

| 印尼事件 | |||

| 蒋公纪念歌 | |||

| 马英九 | |||

| mayingjiu | |||

| 李天羽 | |||

| 苏贞昌 | |||

| 林文漪 | |||

| 陈水扁 | |||

| 陈s扁 | |||

| 陈随便 | |||

| 阿扁 | |||

| a扁 | |||

| 告全国同胞书 | |||

| 台百度湾 | |||

| 台完 | |||

| 台wan | |||

| taiwan | |||

| 台弯 | |||

| 湾台 | |||

| 台湾国 | |||

| 台湾共和国 | |||

| 台军 | |||

| 台独 | |||

| 台毒 | |||

| 台du | |||

| taidu | |||

| twdl | |||

| 一中一台 | |||

| 打台湾 | |||

| 两岸战争 | |||

| 攻占台湾 | |||

| 支持台湾 | |||

| 进攻台湾 | |||

| 占领台湾 | |||

| 统一台湾 | |||

| 收复台湾 | |||

| 登陆台湾 | |||

| 解放台湾 | |||

| 解放tw | |||

| 解决台湾 | |||

| 光复民国 | |||

| 台湾独立 | |||

| 台湾问题 | |||

| 台海问题 | |||

| 台海危机 | |||

| 台海统一 | |||

| 台海大战 | |||

| 台海战争 | |||

| 台海局势 | |||

| 入联 | |||

| 入耳关 | |||

| 中华联邦 | |||

| 国民党 | |||

| x民党 | |||

| 民进党 | |||

| 青天白日 | |||

| 闹独立 | |||

| duli | |||

| fenlie | |||

| 日本万岁 | |||

| 小泽一郎 | |||

| 劣等民族 | |||

| 汉人 | |||

| 汉维 | |||

| 维汉 | |||

| 维吾 | |||

| 吾尔 | |||

| 热比娅 | |||

| 伊力哈木 | |||

| 疆独 | |||

| 东突厥斯坦解放组织 | |||

| 东突解放组织 | |||

| 蒙古分裂分子 | |||

| 列确 | |||

| 阿旺晋美 | |||

| 藏人 | |||

| 臧人 | |||

| zang人 | |||

| 藏民 | |||

| 藏m | |||

| 达赖 | |||

| 赖达 | |||

| dalai | |||

| 哒赖 | |||

| dl喇嘛 | |||

| 丹增嘉措 | |||

| 打砸抢 | |||

| 西独 | |||

| 藏独 | |||

| 葬独 | |||

| 臧独 | |||

| 藏毒 | |||

| 藏du | |||

| zangdu | |||

| 支持zd | |||

| 藏暴乱 | |||

| 藏青会 | |||

| 雪山狮子旗 | |||

| 拉萨 | |||

| 啦萨 | |||

| 啦沙 | |||

| 啦撒 | |||

| 拉sa | |||

| lasa | |||

| la萨 | |||

| 西藏 | |||

| 藏西 | |||

| 藏春阁 | |||

| 藏獨 | |||

| 藏独 | |||

| 藏独立 | |||

| 藏妇会 | |||

| 藏青会 | |||

| 藏字石 | |||

| xizang | |||

| xi藏 | |||

| x藏 | |||

| 西z | |||

| tibet | |||

| 希葬 | |||

| 希藏 | |||

| 硒藏 | |||

| 稀藏 | |||

| 西脏 | |||

| 西奘 | |||

| 西葬 | |||

| 西臧 | |||

| 援藏 | |||

| bjork | |||

| 王千源 | |||

| 安拉 | |||

| 回教 | |||

| 回族 | |||

| 回回 | |||

| 回民 | |||

| 穆斯林 | |||

| 穆罕穆德 | |||

| 穆罕默德 | |||

| 默罕默德 | |||

| 伊斯兰 | |||

| 圣战组织 | |||

| 清真 | |||

| 清zhen | |||

| qingzhen | |||

| 真主 | |||

| 阿拉伯 | |||

| 高丽棒子 | |||

| 韩国狗 | |||

| 满洲第三帝国 | |||

| 满狗 | |||

| 鞑子 | |||

| 江丑闻 | |||

| 江嫡系 | |||

| 江毒 | |||

| 江独裁 | |||

| 江蛤蟆 | |||

| 江核心 | |||

| 江黑心 | |||

| 江胡内斗 | |||

| 江祸心 | |||

| 江家帮 | |||

| 江绵恒 | |||

| 江派和胡派 | |||

| 江派人马 | |||

| 江泉集团 | |||

| 江人马 | |||

| 江三条腿 | |||

| 江氏集团 | |||

| 江氏家族 | |||

| 江氏政治局 | |||

| 江氏政治委员 | |||

| 江梳头 | |||

| 江太上 | |||

| 江戏子 | |||

| 江系人 | |||

| 江系人马 | |||

| 江宰民 | |||

| 江贼 | |||

| 江贼民 | |||

| 江主席 | |||

| 麻果丸 | |||

| 麻将透 | |||

| 麻醉弹 | |||

| 麻醉狗 | |||

| 麻醉枪 | |||

| 麻醉槍 | |||

| 麻醉药 | |||

| 麻醉藥 | |||

| 台独 | |||

| 台湾版假币 | |||

| 台湾独立 | |||

| 台湾国 | |||

| 台湾应该独立 | |||

| 台湾有权独立 | |||

| 天灭中共 | |||

| 中共帮凶 | |||

| 中共保命 | |||

| 中共裁 | |||

| 中共党文化 | |||

| 中共腐败 | |||

| 中共的血旗 | |||

| 中共的罪恶 | |||

| 中共帝国 | |||

| 中共独裁 | |||

| 中共封锁 | |||

| 中共封网 | |||

| 中共腐败 | |||

| 中共黑 | |||

| 中共黑帮 | |||

| 中共解体 | |||

| 中共近期权力斗争 | |||

| 中共恐惧 | |||

| 中共权力斗争 | |||

| 中共任用 | |||

| 中共退党 | |||

| 中共洗脑 | |||

| 中共邪教 | |||

| 中共邪毒素 | |||

| 中共政治游戏 | |||

+ 123

- 0

src/3-JunkDataFilter/sensitive-words/广告.txt

View File

| @@ -0,0 +1,123 @@ | |||

| 兼职 | |||

| 招聘 | |||

| 网络 | |||

| 招聘 | |||

| 有意者 | |||

| 到货 | |||

| 本店 | |||

| 代购 | |||

| 扣扣 | |||

| 客服 | |||

| 微店 | |||

| 兼职 | |||

| 兼值 | |||

| 淘宝 | |||

| 小姐 | |||

| 妓女 | |||

| 包夜 | |||

| 3P | |||

| LY | |||

| JS | |||

| 狼友 | |||

| 技师 | |||

| 推油 | |||

| 胸推 | |||

| BT | |||

| 毒龙 | |||

| 口爆 | |||

| 兼职 | |||

| 楼凤 | |||

| 足交 | |||

| 口暴 | |||

| 口交 | |||

| 全套 | |||

| SM | |||

| 桑拿 | |||

| 吞精 | |||

| 咪咪 | |||

| 婊子 | |||

| 乳方 | |||

| 操逼 | |||

| 全职 | |||

| 性伴侣 | |||

| 网购 | |||

| 网络工作 | |||

| 代理 | |||

| 专业代理 | |||

| 帮忙点一下 | |||

| 帮忙点下 | |||

| 请点击进入 | |||

| 详情请进入 | |||

| 私人侦探 | |||

| 私家侦探 | |||

| 针孔摄象 | |||

| 调查婚外情 | |||

| 信用卡提现 | |||

| 无抵押贷款 | |||

| 广告代理 | |||

| 原音铃声 | |||

| 借腹生子 | |||

| 找个妈妈 | |||

| 找个爸爸 | |||

| 代孕妈妈 | |||

| 代生孩子 | |||

| 代开发票 | |||

| 腾讯客服电话 | |||

| 销售热线 | |||

| 免费订购热线 | |||

| 低价出售 | |||

| 款到发货 | |||

| 回复可见 | |||

| 连锁加盟 | |||

| 加盟连锁 | |||

| 免费二级域名 | |||

| 免费使用 | |||

| 免费索取 | |||

| 蚁力神 | |||

| 婴儿汤 | |||

| 售肾 | |||

| 刻章办 | |||

| 买小车 | |||

| 套牌车 | |||

| 玛雅网 | |||

| 电脑传讯 | |||

| 视频来源 | |||

| 下载速度 | |||

| 高清在线 | |||

| 全集在线 | |||

| 在线播放 | |||

| txt下载 | |||

| 六位qq | |||

| 6位qq | |||

| 位的qq | |||

| 个qb | |||

| 送qb | |||

| 用刀横向切腹 | |||

| 完全自杀手册 | |||

| 四海帮 | |||

| 足球投注 | |||

| 地下钱庄 | |||

| 中国复兴党 | |||

| 阿波罗网 | |||

| 曾道人 | |||

| 六合彩 | |||

| 改卷内幕 | |||

| 替考试 | |||

| 隐形耳机 | |||

| 出售答案 | |||

| 考中答案 | |||

| 答an | |||

| da案 | |||

| 资金周转 | |||

| 救市 | |||

| 股市圈钱 | |||

| 崩盘 | |||

| 资金短缺 | |||

| 证监会 | |||

| 质押贷款 | |||

| 小额贷款 | |||

| 周小川 | |||

| 刘明康 | |||

| 尚福林 | |||

| 孔丹 | |||

+ 326

- 0

src/3-JunkDataFilter/sensitive-words/政治类.txt

View File

| @@ -0,0 +1,326 @@ | |||

| 习近平 | |||

| 平近习 | |||

| xjp | |||

| 习太子 | |||

| 习明泽 | |||

| 老习 | |||

| 温家宝 | |||

| 温加宝 | |||

| 温x | |||

| 温jia宝 | |||

| 温宝宝 | |||

| 温加饱 | |||

| 温加保 | |||

| 张培莉 | |||

| 温云松 | |||

| 温如春 | |||

| 温jb | |||

| 胡温 | |||

| 胡x | |||

| 胡jt | |||

| 胡boss | |||

| 胡总 | |||

| 胡王八 | |||

| hujintao | |||

| 胡jintao | |||

| 胡j涛 | |||

| 胡惊涛 | |||

| 胡景涛 | |||

| 胡紧掏 | |||

| 湖紧掏 | |||

| 胡紧套 | |||

| 锦涛 | |||

| hjt | |||

| 胡派 | |||

| 胡主席 | |||

| 刘永清 | |||

| 胡海峰 | |||

| 胡海清 | |||

| 江泽民 | |||

| 民泽江 | |||

| 江胡 | |||

| 江哥 | |||

| 江主席 | |||

| 江书记 | |||

| 江浙闽 | |||

| 江沢民 | |||

| 江浙民 | |||

| 择民 | |||

| 则民 | |||

| 茳泽民 | |||

| zemin | |||

| ze民 | |||

| 老江 | |||

| 老j | |||

| 江core | |||

| 江x | |||

| 江派 | |||

| 江zm | |||

| jzm | |||

| 江戏子 | |||

| 江蛤蟆 | |||

| 江某某 | |||

| 江贼 | |||

| 江猪 | |||

| 江氏集团 | |||

| 江绵恒 | |||

| 江绵康 | |||

| 王冶坪 | |||

| 江泽慧 | |||

| 邓小平 | |||

| 平小邓 | |||

| xiao平 | |||

| 邓xp | |||

| 邓晓平 | |||

| 邓朴方 | |||

| 邓榕 | |||

| 邓质方 | |||

| 毛泽东 | |||

| 猫泽东 | |||

| 猫则东 | |||

| 猫贼洞 | |||

| 毛zd | |||

| 毛zx | |||

| z东 | |||

| ze东 | |||

| 泽d | |||

| zedong | |||

| 毛太祖 | |||

| 毛相 | |||

| 主席画像 | |||

| 改革历程 | |||

| 朱镕基 | |||

| 朱容基 | |||

| 朱镕鸡 | |||

| 朱容鸡 | |||

| 朱云来 | |||

| 李鹏 | |||

| 李peng | |||

| 里鹏 | |||

| 李月月鸟 | |||

| 李小鹏 | |||

| 李小琳 | |||

| 华主席 | |||

| 华国 | |||

| 国锋 | |||

| 国峰 | |||

| 锋同志 | |||

| 白春礼 | |||

| 薄熙来 | |||

| 薄一波 | |||

| 蔡赴朝 | |||

| 蔡武 | |||

| 曹刚川 | |||

| 常万全 | |||

| 陈炳德 | |||

| 陈德铭 | |||

| 陈建国 | |||

| 陈良宇 | |||

| 陈绍基 | |||

| 陈同海 | |||

| 陈至立 | |||

| 戴秉国 | |||

| 丁一平 | |||

| 董建华 | |||

| 杜德印 | |||

| 杜世成 | |||

| 傅锐 | |||

| 郭伯雄 | |||

| 郭金龙 | |||

| 贺国强 | |||

| 胡春华 | |||

| 耀邦 | |||

| 华建敏 | |||

| 黄华华 | |||

| 黄丽满 | |||

| 黄兴国 | |||

| 回良玉 | |||

| 贾庆林 | |||

| 贾廷安 | |||

| 靖志远 | |||

| 李长春 | |||

| 李春城 | |||

| 李建国 | |||

| 李克强 | |||

| 李岚清 | |||

| 李沛瑶 | |||

| 李荣融 | |||

| 李瑞环 | |||

| 李铁映 | |||

| 李先念 | |||

| 李学举 | |||

| 李源潮 | |||

| 栗智 | |||

| 梁光烈 | |||

| 廖锡龙 | |||

| 林树森 | |||

| 林炎志 | |||

| 林左鸣 | |||

| 令计划 | |||

| 柳斌杰 | |||

| 刘奇葆 | |||

| 刘少奇 | |||

| 刘延东 | |||

| 刘云山 | |||

| 刘志军 | |||

| 龙新民 | |||

| 路甬祥 | |||

| 罗箭 | |||

| 吕祖善 | |||

| 马飚 | |||

| 马恺 | |||

| 孟建柱 | |||

| 欧广源 | |||

| 强卫 | |||

| 沈跃跃 | |||

| 宋平顺 | |||

| 粟戎生 | |||

| 苏树林 | |||

| 孙家正 | |||

| 铁凝 | |||

| 屠光绍 | |||

| 王东明 | |||

| 汪东兴 | |||

| 王鸿举 | |||

| 王沪宁 | |||

| 王乐泉 | |||

| 王洛林 | |||

| 王岐山 | |||

| 王胜俊 | |||

| 王太华 | |||

| 王学军 | |||

| 王兆国 | |||

| 王振华 | |||

| 吴邦国 | |||

| 吴定富 | |||

| 吴官正 | |||

| 无官正 | |||

| 吴胜利 | |||

| 吴仪 | |||

| 奚国华 | |||

| 习仲勋 | |||

| 徐才厚 | |||

| 许其亮 | |||

| 徐绍史 | |||

| 杨洁篪 | |||

| 叶剑英 | |||

| 由喜贵 | |||

| 于幼军 | |||

| 俞正声 | |||

| 袁纯清 | |||

| 曾培炎 | |||

| 曾庆红 | |||

| 曾宪梓 | |||

| 曾荫权 | |||

| 张德江 | |||

| 张定发 | |||

| 张高丽 | |||

| 张立昌 | |||

| 张荣坤 | |||

| 张志国 | |||

| 赵洪祝 | |||

| 紫阳 | |||

| 周生贤 | |||

| 周永康 | |||

| 朱海仑 | |||

| 中南海 | |||

| 大陆当局 | |||

| 中国当局 | |||

| 北京当局 | |||

| 共产党 | |||

| 党产共 | |||

| 共贪党 | |||

| 阿共 | |||

| 产党共 | |||

| 公产党 | |||

| 工产党 | |||

| 共c党 | |||

| 共x党 | |||

| 共铲 | |||

| 供产 | |||

| 共惨 | |||

| 供铲党 | |||

| 供铲谠 | |||

| 供铲裆 | |||

| 共残党 | |||

| 共残主义 | |||

| 共产主义的幽灵 | |||

| 拱铲 | |||

| 老共 | |||

| 中共 | |||

| 中珙 | |||

| 中gong | |||

| gc党 | |||

| 贡挡 | |||

| gong党 | |||

| g产 | |||

| 狗产蛋 | |||

| 共残裆 | |||

| 恶党 | |||

| 邪党 | |||

| 共产专制 | |||

| 共产王朝 | |||

| 裆中央 | |||

| 土共 | |||

| 土g | |||

| 共狗 | |||

| g匪 | |||

| 共匪 | |||

| 仇共 | |||

| 政府 | |||

| 症腐 | |||

| 政腐 | |||

| 政付 | |||

| 正府 | |||

| 政俯 | |||

| 政f | |||

| zhengfu | |||

| 政zhi | |||

| 挡中央 | |||

| 档中央 | |||

| 中央领导 | |||

| 中国zf | |||

| 中央zf | |||

| 国wu院 | |||

| 中华帝国 | |||

| gong和 | |||

| 大陆官方 | |||

| 北京政权 | |||

| 江泽民 | |||

| 胡锦涛 | |||

| 温家宝 | |||

| 习近平 | |||

| 习仲勋 | |||

| 贺国强 | |||

| 贺子珍 | |||

| 周永康 | |||

| 李长春 | |||

| 李德生 | |||

| 王岐山 | |||

| 姚依林 | |||

| 回良玉 | |||

| 李源潮 | |||

| 李干成 | |||

| 戴秉国 | |||

| 黄镇 | |||

| 刘延东 | |||

| 刘瑞龙 | |||

| 俞正声 | |||

| 黄敬 | |||

| 薄熙 | |||

| 薄一波 | |||

| 周小川 | |||

| 周建南 | |||

| 温云松 | |||

| 徐明 | |||

| 江泽慧 | |||

| 江绵恒 | |||

| 江绵康 | |||

| 李小鹏 | |||

| 李鹏 | |||

| 李小琳 | |||

| 朱云来 | |||

| 朱容基 | |||

| 法轮功 | |||

| 李洪志 | |||

| 新疆骚乱 | |||

+ 14600

- 0

src/3-JunkDataFilter/sensitive-words/敏感词.txt

File diff suppressed because it is too large

View File

+ 178

- 0

src/3-JunkDataFilter/sensitive-words/暴恐词库.txt

View File

| @@ -0,0 +1,178 @@ | |||

| 福音会 | |||

| 中国教徒 | |||

| 统一教 | |||

| 观音法门 | |||

| 清海无上师 | |||

| 盘古 | |||

| 李洪志 | |||

| 志洪李 | |||

| 李宏志 | |||

| 轮功 | |||

| 法轮 | |||

| 轮法功 | |||

| 三去车仑 | |||

| 氵去车仑 | |||

| 发论工 | |||

| 法x功 | |||

| 法o功 | |||

| 法0功 | |||

| 法一轮一功 | |||

| 轮子功 | |||

| 车仑工力 | |||

| 法lun | |||

| fa轮 | |||

| 法lg | |||

| flg | |||

| fl功 | |||

| falungong | |||

| 大法弟子 | |||

| 大纪元 | |||

| dajiyuan | |||

| 明慧网 | |||

| 明慧周报 | |||

| 正见网 | |||

| 新唐人 | |||

| 伪火 | |||

| 退党 | |||

| tuidang | |||

| 退dang | |||

| 超越红墙 | |||

| 自fen | |||

| 真善忍 | |||

| 九评 | |||

| 9评 | |||

| 9ping | |||

| 九ping | |||

| jiuping | |||

| 藏字石 | |||

| 集体自杀 | |||

| 自sha | |||

| zi杀 | |||

| suicide | |||

| titor | |||

| 逢8必灾 | |||

| 逢八必灾 | |||

| 逢9必乱 | |||

| 逢九必乱 | |||

| 朱瑟里诺 | |||

| 根达亚文明 | |||

| 诺查丹玛斯 | |||

| 人类灭亡进程表 | |||

| 按照马雅历法 | |||

| 推背图 | |||

| 推bei图 | |||

| 济世灵文 | |||

| 诸世纪 | |||

| 电狗 | |||

| 电话定位器 | |||

| 电话拦截器 | |||

| 电话窃听 | |||

| 电话监 | |||

| 电话交友 | |||

| 电话追杀系统 | |||

| 电击枪 | |||

| 电鸡 | |||

| 电警棒 | |||

| 枪出售 | |||

| 枪的制 | |||

| 枪货到 | |||

| 枪决女犯 | |||

| 枪模 | |||

| 枪手 | |||

| 枪销售 | |||

| 枪械制 | |||

| 枪子弹 | |||

| 售步枪 | |||

| 售纯度 | |||

| 售单管 | |||

| 售弹簧刀 | |||

| 售防身 | |||

| 售狗子 | |||

| 售虎头 | |||

| 售火药 | |||

| 售假币 | |||

| 售健卫 | |||

| 售军用 | |||

| 售猎枪 | |||

| 售氯胺 | |||

| 售麻醉 | |||

| 售枪支 | |||

| 售热武 | |||

| 售三棱 | |||

| 售手枪 | |||

| 售五四 | |||

| 售一元硬 | |||

| 售子弹 | |||

| 售左轮 | |||

| 亚砷(酸)酐 | |||

| 亚砷酸钾 | |||

| 亚砷酸钠 | |||

| 亚硒酸 | |||

| 亚硒酸二钠 | |||

| 亚硒酸镁 | |||

| 亚硒酸钠 | |||

| 亚硒酸氢钠 | |||

| 亚硝酸乙酯 | |||

| 亚硝酰乙氧 | |||

| 氧化二丁基锡 | |||

| 氧化汞 | |||

| 氧化铊 | |||

| 氧化亚铊 | |||

| 氧氯化磷 | |||

| 原装弹 | |||

| 原子弹方法 | |||

| 原子弹清单 | |||

| 安眠酮 | |||

| 代血浆 | |||

| 普萘洛尔 | |||

| 呋塞米 | |||

| 西布曲明 | |||

| testosterone | |||

| 胰岛素样生长因子 | |||

| 促红细胞生成素 | |||

| 地西泮 | |||

| 尼可刹米 | |||

| 甲睾酮 | |||

| adrenaline | |||

| erythropoietin | |||

| 地奈德 | |||

| 莫达非尼 | |||

| 氯噻嗪 | |||

| 苯巴比妥 | |||

| 促性腺激素 | |||

| 泼尼松 | |||

| 麻黄草 | |||

| 雄烯二醇 | |||

| 地塞米松 | |||

| tamoxifen | |||

| strychnine | |||

| androst | |||

| 新型毒品 | |||

| 杜冷丁 | |||

| 兴奋剂 | |||

| mdma | |||

| 海洛因 | |||

| 海luo因 | |||

| heroin | |||

| diamorphine | |||

| diacetylmorphine | |||

| 鸦片 | |||

| 阿芙蓉 | |||

| 咖啡因 | |||

| cocain | |||

| 三唑仑 | |||

| 美沙酮 | |||

| 麻古 | |||

| k粉 | |||

| 凯他敏 | |||

| ketamine | |||

| 冰毒 | |||

| 苯丙胺 | |||

| cannabis | |||

| 大麻 | |||

| 爱他死 | |||

| 氯胺酮 | |||

| benzodiazepines | |||

| 甲基安非他明 | |||

| 安非他命 | |||

| 吗啡 | |||

+ 569

- 0

src/3-JunkDataFilter/sensitive-words/民生词库.txt

View File

| @@ -0,0 +1,569 @@ | |||

| 拆迁 | |||

| 拆迁 | |||

| 纠纷 | |||

| 纠纷 | |||

| 盗窃 | |||

| 盗窃 | |||

| 安眠酮 | |||

| 代药物毒品类:血浆 | |||

| 普萘洛尔 | |||

| 呋塞米 | |||

| 西布曲明 | |||

| testosterone | |||

| 胰岛素样生长因子 | |||

| 促红细胞生成素 | |||

| 地西泮 | |||

| 尼可刹米 | |||

| 甲睾酮 | |||

| adrenaline | |||

| erythropoietin | |||

| 地奈德 | |||

| 莫达非尼 | |||

| 氯噻嗪 | |||

| 苯巴比妥 | |||

| 促性腺激素 | |||

| 泼尼松 | |||

| 麻黄草 | |||

| 雄烯二醇 | |||

| 地塞米松 | |||

| tamoxifen | |||

| strychnine | |||

| androst | |||

| 新型毒品 | |||

| 杜冷丁 | |||

| 兴奋剂 | |||

| mdma | |||

| 海洛因 | |||

| 海luo因 | |||

| heroin | |||

| diamorphine | |||

| diacetylmorphine | |||

| 鸦片 | |||

| 阿芙蓉 | |||

| 咖啡因 | |||

| cocain | |||

| 三唑仑 | |||

| 美沙酮 | |||

| 麻古 | |||

| k粉 | |||

| 凯他敏 | |||

| ketamine | |||

| 冰毒 | |||

| 苯丙胺 | |||

| cannabis | |||

| 大麻 | |||

| 爱他死 | |||

| 氯胺酮 | |||

| benzodiazepines | |||

| 甲基安非他明 | |||

| 安非他命 | |||

| 吗啡 | |||

| morphine | |||

| 摇头丸 | |||

| 迷药 | |||

| 乖乖粉 | |||

| narcotic | |||

| 麻醉药 | |||

| 精神药品 | |||

| 专业代理 | |||

| 帮忙点一下 | |||

| 帮忙点下 | |||

| 请点击进入 | |||

| 详情请进入 | |||

| 私人侦探 | |||

| 私家侦探 | |||

| 针孔摄象 | |||

| 调查婚外情 | |||

| 信用卡提现 | |||

| 无抵押贷款 | |||

| 广告代理 | |||

| 原音铃声 | |||

| 借腹生子 | |||

| 找个妈妈 | |||

| 找个爸爸 | |||

| 代孕妈妈 | |||

| 代生孩子 | |||

| 代开发票 | |||

| 腾讯客服电话 | |||

| 销售热线 | |||

| 免费订购热线 | |||

| 低价出售 | |||

| 款到发货 | |||

| 回复可见 | |||

| 连锁加盟 | |||

| 加盟连锁 | |||

| 免费二级域名 | |||

| 免费使用 | |||

| 免费索取 | |||

| 蚁力神 | |||

| 婴儿汤 | |||

| 售肾 | |||

| 刻章办 | |||

| 买小车 | |||

| 套牌车 | |||

| 玛雅网 | |||

| 电脑传讯 | |||

| 视频来源 | |||

| 下载速度 | |||

| 高清在线 | |||

| 全集在线 | |||

| 在线播放 | |||

| txt下载 | |||

| 六位qq | |||

| 6位qq | |||

| 位的qq | |||

| 个qb | |||

| 送qb | |||

| 用刀横向切腹 | |||

| 完全自杀手册 | |||

| 四海帮 | |||

| 足球投注 | |||

| 地下钱庄 | |||

| 中国复兴党 | |||

| 阿波罗网 | |||

| 曾道人 | |||

| 六合彩 | |||

| 改卷内幕 | |||

| 替考试 | |||

| 隐形耳机 | |||

| 出售答案 | |||

| 考中答案 | |||

| 答an | |||

| da案 | |||

| 资金周转 | |||

| 救市 | |||

| 股市圈钱 | |||

| 崩盘 | |||

| 资金短缺 | |||

| 证监会 | |||

| 质押贷款 | |||

| 小额贷款 | |||

| 周小川 | |||

| 刘明康 | |||

| 尚福林 | |||

| 孔丹 | |||

| 汉芯造假 | |||

| 杨树宽 | |||

| 中印边界谈判结果 | |||

| 喂奶门 | |||

| 摸nai门 | |||

| 酒瓶门 | |||

| 脱裤门 | |||

| 75事件 | |||

| 乌鲁木齐 | |||

| 新疆骚乱 | |||

| 针刺 | |||

| 打针 | |||

| 食堂涨价 | |||

| 饭菜涨价 | |||

| h1n1 | |||

| 瘟疫爆发 | |||

| yangjia | |||

| y佳 | |||

| yang佳 | |||

| 杨佳 | |||

| 杨j | |||

| 袭警 | |||

| 杀警 | |||

| 武侯祠 | |||

| 川b26931 | |||

| 贺立旗 | |||

| 周正毅 | |||

| px项目 | |||

| 骂四川 | |||

| 家l福 | |||

| 家le福 | |||

| 加了服 | |||

| 麦当劳被砸 | |||

| 豆腐渣 | |||

| 这不是天灾 | |||

| 龙小霞 | |||

| 震其国土 | |||

| yuce | |||

| 提前预测 | |||

| 地震预测 | |||

| 隐瞒地震 | |||

| 李四光预测 | |||

| 蟾蜍迁徙 | |||

| 地震来得更猛烈 | |||

| 八级地震毫无预报 | |||

| 踩踏事故 | |||

| 聂树斌 | |||

| 万里大造林 | |||

| 陈相贵 | |||

| 张丹红 | |||

| 尹方明 | |||

| 李树菲 | |||

| 王奉友 | |||

| 零八奥运艰 | |||

| 惨奥 | |||

| 奥晕 | |||

| 凹晕 | |||

| 懊运 | |||

| 懊孕 | |||

| 奥孕 | |||

| 奥你妈的运 | |||

| 反奥 | |||

| 628事件 | |||

| weng安 | |||

| wengan | |||

| 翁安 | |||

| 瓮安事件 | |||

| 化工厂爆炸 | |||

| 讨回工资 | |||

| 代办发票 | |||

| 代办各 | |||

| 代办文 | |||

| 代办学 | |||

| 代办制 | |||

| 代辦 | |||

| 代表烦 | |||

| 代开发票 | |||

| 代開 | |||

| 代考 | |||

| 代理发票 | |||

| 代理票据 | |||

| 代您考 | |||

| 代讨债 | |||

| 代写毕 | |||

| 代写论文 | |||

| 代孕 | |||

| 代追债 | |||

| 考后付款 | |||

| 考机构 | |||

| 考考邓 | |||

| 考联盟 | |||

| 考前答案 | |||

| 考前付 | |||

| 考前密卷 | |||

| 考前预测 | |||

| 考试,答案 | |||

| 考试,作弊器 | |||

| 考试包过 | |||

| 考试保 | |||

| 考试答案 | |||

| 考试机构 | |||

| 考试联盟 | |||

| 考试枪 | |||

| 考试作弊 | |||

| 考试作弊器 | |||

| 考研考中 | |||

| 考中答案 | |||

| 透视功能 | |||

| 透视镜 | |||

| 透视扑 | |||

| 透视器 | |||

| 透视眼睛 | |||

| 透视眼镜 | |||

| 透视药 | |||

| 透视仪 | |||

| 打死经过 | |||

| 打死人 | |||

| 打砸办公 | |||

| 打砸抢 | |||

| 安眠酮 | |||

| 代血浆 | |||

| 普萘洛尔 | |||

| 呋塞米 | |||

| 西布曲明 | |||

| testosterone | |||

| 胰岛素样生长因子 | |||

| 促红细胞生成素 | |||

| 地西泮 | |||

| 尼可刹米 | |||

| 甲睾酮 | |||

| adrenaline | |||

| erythropoietin | |||

| 地奈德 | |||

| 莫达非尼 | |||

| 氯噻嗪 | |||

| 苯巴比妥 | |||

| 促性腺激素 | |||

| 泼尼松 | |||

| 麻黄草 | |||

| 雄烯二醇 | |||

| 地塞米松 | |||

| tamoxifen | |||

| strychnine | |||

| androst | |||

| 新型毒品 | |||

| 杜冷丁 | |||

| 兴奋剂 | |||

| mdma | |||

| 海洛因 | |||

| 海luo因 | |||

| heroin | |||

| diamorphine | |||

| diacetylmorphine | |||

| 鸦片 | |||

| 阿芙蓉 | |||

| 咖啡因 | |||

| cocain | |||

| 三唑仑 | |||

| 美沙酮 | |||

| 麻古 | |||

| k粉 | |||

| 凯他敏 | |||

| ketamine | |||

| 冰毒 | |||

| 苯丙胺 | |||

| cannabis | |||

| 大麻 | |||

| 爱他死 | |||

| 氯胺酮 | |||

| benzodiazepines | |||

| 甲基安非他明 | |||

| 安非他命 | |||

| 吗啡 | |||

| KC短信 | |||

| KC嘉年华 | |||

| 短信广告 | |||

| 短信群发 | |||

| 短信群发器 | |||

| 小6灵通 | |||

| 短信商务广告 | |||

| 段录定 | |||

| 无界浏览 | |||

| 无界浏览器 | |||

| 无界 | |||

| 无网界 | |||

| 无网界浏览 | |||

| 无帮国 | |||

| KC提示 | |||

| KC网站 | |||

| UP8新势力 | |||

| 白皮书 | |||

| UP新势力 | |||

| 移民 | |||

| 易达网络卡 | |||

| 安魂网 | |||

| 罢工 | |||

| 罢课 | |||

| 纽崔莱七折 | |||

| 手机复制 | |||

| 手机铃声 | |||

| 网关 | |||

| 神通加持法 | |||

| 全1球通 | |||

| 如6意通 | |||

| 清仓 | |||

| 灵动卡 | |||

| 答案卫星接收机 | |||

| 高薪养廉 | |||

| 考后付款 | |||

| 佳静安定片 | |||

| 航空母舰 | |||

| 航空售票 | |||

| 号码百事通 | |||

| 考前发放 | |||

| 成本价 | |||

| 诚信通手机商城 | |||

| 高利贷 | |||

| 联4通 | |||

| 黑庄 | |||

| 黑手党 | |||

| 黑车 | |||

| 联通贵宾卡 | |||

| 联总 | |||

| 联总这声传单 | |||

| 联总之声传单 | |||

| 高息贷款 | |||

| 高干子弟 | |||

| 恭喜你的号码 | |||

| 恭喜您的号码 | |||

| 高干子女 | |||

| 各个银行全称 | |||

| 各种发票 | |||

| 高官 | |||

| 高官互调 | |||

| 高官子女 | |||

| 喝一送一 | |||

| 卡号 | |||

| 复制 | |||

| 监听王 | |||

| 传单 | |||

| 旦科 | |||

| 钓鱼岛 | |||

| 钓鱼台 | |||

| 当官靠后台 | |||

| 党校安插亲信 | |||

| 传九促三 | |||

| 客户端非法字符 | |||

| 刻章 | |||

| 大麻树脂 | |||

| 大麻油 | |||

| 大法 | |||

| 大法弟子 | |||

| dpp大法 | |||

| fa lun | |||

| falu | |||

| 发抡 | |||

| 发抡功 | |||

| 洗脑 | |||

| 下法轮 | |||

| 发轮 | |||

| 发伦 | |||

| 发伦功 | |||

| 发仑 | |||

| 发沦 | |||

| 发纶 | |||

| 发论 | |||

| 发论功 | |||

| 发论公 | |||

| 发正念 | |||

| 发囵 | |||

| 发愣 | |||

| 发瞟 | |||

| 罚抡 | |||

| 罚伦 | |||

| 罚仑 | |||

| 罚沦 | |||

| 罚纶 | |||

| 罚囵 | |||

| 筏抡 | |||

| 筏轮 | |||

| 筏伦 | |||

| 筏仑 | |||

| 筏沦 | |||

| 筏纶 | |||

| 筏论 | |||

| 筏囵 | |||

| 伐抡 | |||

| 伐轮 | |||

| 伐伦 | |||

| 伐仑 | |||

| 伐沦 | |||

| 伐论 | |||

| 伐囵 | |||

| 乏抡 | |||

| 乏轮 | |||

| 乏伦 | |||

| 乏仑 | |||

| 乏沦 | |||

| 乏纶 | |||

| 乏论 | |||

| 乏囵 | |||

| 阀抡 | |||

| 阀伦 | |||

| 阀仑 | |||

| 阀沦 | |||

| 阀纶 | |||

| 阀论 | |||

| 阀囵 | |||

| 法 轮 功 | |||

| 法*功 | |||

| 法.轮.功 | |||

| 法L功 | |||

| 法lun功 | |||

| 法功 | |||

| 法会 | |||

| 法抡 | |||

| 法抡功 | |||

| 法轮 | |||

| 法轮大法 | |||

| 法轮佛法 | |||

| 法轮功 | |||

| 法伦 | |||

| 法仑 | |||

| 法沦 | |||

| 法纶 | |||

| 法论 | |||

| 法十轮十功 | |||

| 法西斯 | |||

| 法院 | |||

| 法正 | |||

| 法谪 | |||

| 法谪功 | |||

| 法輪 | |||

| 法囵 | |||

| 法愣 | |||

| 珐.輪功 | |||

| 珐抡 | |||

| 珐轮 | |||

| 珐伦 | |||

| 珐仑 | |||

| 珐沦 | |||

| 五不 | |||

| 五不争鸣论坛 | |||

| 五出三进 | |||

| 五套功法 | |||

| 邝锦文 | |||

| 垡抡 | |||

| 垡轮 | |||

| 垡伦 | |||

| 垡仑 | |||

| 垡沦 | |||

| 垡纶 | |||

| 垡论 | |||

| 垡囵 | |||

| 茳澤民 | |||

| 荭志 | |||

| 闳志 | |||

| 闵维方 | |||

| 氵去 | |||

| 氵去车仑工力 | |||

| 转法轮 | |||

| 砝抡 | |||

| 砝轮 | |||

| 砝伦 | |||

| 砝仑 | |||

| 砝沦 | |||

| 砝纶 | |||

| 真、善、忍 | |||

| 真理教 | |||

| 真善美 | |||

| 真善忍 | |||

| 砝论 | |||

| 砝囵 | |||

| 泓志 | |||

| 屙民 | |||

| 珐纶 | |||

| 珐论 | |||

| 珐囵 | |||

| falun | |||

| Falundafa | |||

| fa轮 | |||

| Flg | |||

| 弟子 | |||

| 地下教会 | |||

| 炼功 | |||

| 梦网洪志 | |||

| 轮大 | |||

| 抡功 | |||

| 轮功 | |||

| 伦功 | |||

| 摩门教 | |||

| 三水法轮 | |||

| 三唑仑 | |||

| 天皇 | |||

| 天怒 | |||

| 天葬 | |||

| 车臣 | |||

| 达赖 | |||

| 功法 | |||

| 讲法 | |||

| 基督 | |||

| 基督教 | |||

| 护法 | |||

| 回教 | |||

| 教徒 | |||

| 功友 | |||

| 大师 | |||

| 达赖喇嘛 | |||

| 虹志 | |||

| 鸿志 | |||

| 洪传 | |||

| 贯通两极法 | |||

| 光祖 | |||

| 洪吟 | |||

| 洪哲胜 | |||

| 洪志 | |||

| 宏法 | |||

| 观世音 | |||

| 宏志 | |||

| 弘志 | |||

| 古兰经 | |||

| 红志 | |||

| 车库 | |||

| 车仑 | |||

| 经文 | |||

+ 437

- 0

src/3-JunkDataFilter/sensitive-words/涉枪涉爆违法信息关键词.txt

View File

| @@ -0,0 +1,437 @@ | |||

| 出售雷管 | |||

| 炸药 | |||

| 炸药 | |||

| 出售炸药 | |||

| 出售炸药 电话 | |||

| 制作火药配方 | |||

| 炸药出售 | |||

| 出售雷管炸药 | |||

| 火药配方 | |||

| 在家里做原子弹 | |||

| 硝酸甘油炸弹制作 | |||

| TNT 炸弹的制作 | |||

| 硝铵炸药配方 | |||

| 硝酸甘油制作 | |||

| 炸弹配方 | |||

| 简易炸药 | |||

| 火药制作简易炸弹 | |||

| 炸弹制作 | |||

| 硝酸甘油 | |||

| 硝酸甘油炸弹制作方法 | |||

| 手把手教你做炸弹 | |||

| 恐怖分子傻瓜手册 | |||

| 氢弹手工制作方法 | |||

| 起爆器 | |||

| 自制燃烧弹 | |||

| 燃烧弹 | |||

| 弹药制造方法 | |||

| 液体炸药配方 | |||

| 炸药配方与制作 | |||

| 自制炸药配方 | |||

| tnt炸药成分 | |||

| 炸药的制作 | |||

| tnt炸药配方 | |||

| 各种炸药配方大全 | |||

| 炸药的成分 | |||

| 燃烧弹的制作方 | |||

| 黑火药的配方 | |||

| 黑火药的制作 | |||

| 黑火药比例 | |||

| 燃烧弹 制作 | |||

| 黑火药配方比例 | |||

| 黑火药的成分 | |||

| 原子弹配方 | |||

| 原子弹DIY教程 | |||

| C4炸药 | |||

| 雷管出售 | |||

| 制作硝化甘油 | |||

| DIY核弹 | |||

| 核弹制作方法 | |||

| 原子弹制作手册 | |||

| 简易原子弹教程 | |||

| 黑火药配方 | |||

| 自制炸药方法 | |||

| 教你怎么用土办法做武器 | |||

| 莫洛托夫鸡尾酒的配方 | |||

| zz炸弹的制作方法 | |||

| C4炸药的成分 | |||

| 炸药制造生产工艺 | |||

| 原子弹制造完全手册 | |||

| 「红烧兔子」大餐 | |||

| 汽车炸弹制作 | |||

| 人体炸弹制作流程 | |||

| 炸弹制作从入门 | |||

| 鸡尾酒炸弹制作 | |||

| 集束炸弹制作 | |||

| 硝酸铵的简便制取方法 | |||

| 莫洛托夫燃烧弹 | |||

| 塑胶炸弹 | |||

| 用火药混合塑料 | |||

| 高性能爆炸物质混合 | |||

| 原子弹 制作方法 | |||

| 手教你制作原子弹 | |||

| 轻型巡航导弹的制作 | |||

| 黑索金的制造过程 | |||

| Zha药制造进程 | |||

| 购买自爆材料 | |||

| 可塑炸弹制作方法 | |||

| C4塑胶炸药 | |||

| 水浴法制TNT | |||

| 简易核弹头的制造 | |||

| 高爆炸药的基本配方 | |||

| 如何制硝化甘油 | |||

| 燃烧剂的简易制备 | |||

| 简易大威力炸弹 | |||

| 炸弹制作入门 | |||

| 硝酸甘油炸药制作 | |||

| 基本配方是硝酸甘 | |||

| 燃烧剂的简易制作 | |||

| 制作性能优良的燃烧弹 | |||

| 酸甘油炸药 | |||

| 简易导弹制作 | |||

| 核弹头的制造 | |||

| 可塑炸弹制作 | |||

| 炸药硝酸甘油制法 | |||

| 原子弹制作方法 | |||

| 火药的配方 | |||

| 手教你做原子弹 | |||

| 怎么用土办法做武器 | |||

| 手把手教你制作原子弹 | |||

| 燃烧弹的制作方法 | |||

| 怎么自制燃烧弹 | |||

| TNT炸弹制作 | |||

| 高爆炸药基本配方 | |||

| 爆破和杀伤燃烧弹 | |||

| 炸弹制作从入门到精 | |||

| 写的炸弹制作教程 | |||

| 原子弹制作 方法 | |||

| 教你制作原子弹 | |||

| 下硝化甘油的制作方法 | |||

| DIY原子弹 | |||

| 原子弹制作 | |||

| 炸药及其制造方法 | |||

| ATOM弹制造 | |||

| 三分钟教你原子弹 | |||

| 火药原理 | |||

| 简易炸弹 | |||

| 简易燃烧弹 | |||

| 土炸药成份 | |||

| 你怎么用土办法做武器 | |||

| 燃烧弹制作 | |||

| 燃烧瓶的制作方法 | |||

| 炸弹制作手册 硫酸 | |||

| 原子弹制作方法DIY | |||

| 汽油三分之一白糖混合 | |||

| 炸药的制作配方 | |||

| 燃烧弹的制作方 | |||

| 炸药的配方 | |||

| 如何自制燃烧弹 | |||

| 标准炸弹教程 | |||

| 黑火药和硝酸钾农药混合 | |||

| 恐怖分子自己制造炸弹 | |||

| 手柄制作炸弹引爆器 | |||

| 制作炸弹首先要 | |||

| 燃烧瓶制作 | |||

| 出售炸药QQ | |||

| 出售气枪 | |||

| 出售气枪 QQ | |||

| 出售气枪 电话 | |||

| 出售手枪 | |||

| 出售手枪 QQ | |||

| 出售手枪 电话 | |||

| 出售猎枪 | |||

| 出售猎枪 QQ | |||

| 出售猎枪 电话 | |||

| 出售汽枪 | |||

| 出售步枪 | |||

| 出售狙击枪 | |||

| 出售手狗 | |||

| 出售气狗 | |||

| 出售猎狗 | |||

| 【手枪出售】联系电话 | |||

| 64式手枪出售 | |||

| 92式手枪出售 | |||

| 出售:高仿真枪 | |||

| 出售54式手枪 | |||

| 出售M1911 | |||

| 出售各类猎狗 | |||

| 出售国产军用54手枪 | |||

| 出售火枪 –游戏 | |||

| 出售进口金属枪模 | |||

| 出售仿真枪 | |||

| 出售麻醉枪免收任何定金 | |||

| 出售手枪=货到付款 | |||

| 出售外国枪模 | |||

| 出售袖珍手枪 | |||

| 娥眉气枪出售 | |||

| 防身手枪QQ | |||

| 防身武器手枪 | |||

| 仿真枪QQ | |||

| 钢珠枪小口径步枪 | |||

| 高压狗QQ | |||

| 高压气枪,气枪子弹 | |||

| 高压气枪|电狗专卖 | |||

| 高压气枪|气枪货到付款 | |||

| 高压气枪专卖店 | |||

| 各类军用枪 | |||

| 各类军用枪支 | |||

| 各式气枪出售 | |||

| 工字牌气枪出售专卖 | |||

| 气枪 | |||

| 工字汽狗麻醉枪 | |||

| 供应军用手枪 | |||

| 供应汽枪 | |||

| 购买枪支QQ | |||

| 国产汽狗专卖|气枪货 | |||

| 集团出售手枪 | |||

| 集团出售手枪气枪 | |||

| 进口气枪,气枪子弹 | |||

| 军用54出售 | |||

| 军用77出售 | |||

| 军用手枪钢珠枪 | |||

| 里有汽枪出售 | |||

| 猎枪出售/枪 | |||

| 猎枪仿真枪QQ | |||

| 猎枪麻醉枪钢珠枪 | |||

| 猎枪哪里有卖 | |||

| 猎枪手枪买卖 | |||

| 猎枪销售手枪销售气枪 | |||

| 猎枪专卖店 | |||

| 猎枪子弹底火专卖 | |||

| 麻醉枪出售可验货 | |||

| 麻醉枪猎枪军用 | |||

| 买卖54狗 | |||

| 买卖64狗 | |||

| 买卖警察枪支 | |||

| 买卖军狗 | |||

| 买卖军用枪支 | |||

| 卖64手枪 | |||