Compare commits

merge into: Huawei_Technology:master

Huawei_Technology:master

Huawei_Technology:r0.1

Huawei_Technology:r0.2

Huawei_Technology:r0.3

Huawei_Technology:r0.5

Huawei_Technology:r0.6

Huawei_Technology:r0.7

Huawei_Technology:r1.0

Huawei_Technology:r1.1

Huawei_Technology:r1.2

Huawei_Technology:revert-merge-208-master

pull from: Huawei_Technology:r0.1

Huawei_Technology:master

Huawei_Technology:r0.1

Huawei_Technology:r0.2

Huawei_Technology:r0.3

Huawei_Technology:r0.5

Huawei_Technology:r0.6

Huawei_Technology:r0.7

Huawei_Technology:r1.0

Huawei_Technology:r1.1

Huawei_Technology:r1.2

Huawei_Technology:revert-merge-208-master

100 changed files with 696 additions and 8671 deletions

Split View

Diff Options

-

+2 -3.gitee/PULL_REQUEST_TEMPLATE.md

-

+0 -19.github/ISSUE_TEMPLATE/RFC.md

-

+0 -43.github/ISSUE_TEMPLATE/bug-report.md

-

+0 -19.github/ISSUE_TEMPLATE/task-tracking.md

-

+0 -24.github/PULL_REQUEST_TEMPLATE.md

-

+1 -1.gitignore

-

+28 -87README.md

-

+0 -130README_CN.md

-

+5 -248RELEASE.md

-

BINdocs/adversarial_robustness_cn.png

-

BINdocs/adversarial_robustness_en.png

-

BINdocs/differential_privacy_architecture_cn.png

-

BINdocs/differential_privacy_architecture_en.png

-

BINdocs/fuzzer_architecture_cn.png

-

BINdocs/fuzzer_architecture_en.png

-

BINdocs/mindarmour_architecture.png

-

BINdocs/privacy_leakage_cn.png

-

BINdocs/privacy_leakage_en.png

-

+62 -0example/data_processing.py

-

+46 -0example/mnist_demo/README.md

-

+5 -4example/mnist_demo/lenet5_net.py

-

+19 -11example/mnist_demo/mnist_attack_cw.py

-

+20 -11example/mnist_demo/mnist_attack_deepfool.py

-

+27 -19example/mnist_demo/mnist_attack_fgsm.py

-

+24 -19example/mnist_demo/mnist_attack_genetic.py

-

+27 -19example/mnist_demo/mnist_attack_hsja.py

-

+22 -13example/mnist_demo/mnist_attack_jsma.py

-

+30 -21example/mnist_demo/mnist_attack_lbfgs.py

-

+24 -15example/mnist_demo/mnist_attack_nes.py

-

+29 -21example/mnist_demo/mnist_attack_pgd.py

-

+23 -14example/mnist_demo/mnist_attack_pointwise.py

-

+20 -15example/mnist_demo/mnist_attack_pso.py

-

+22 -14example/mnist_demo/mnist_attack_salt_and_pepper.py

-

+144 -0example/mnist_demo/mnist_defense_nad.py

-

+44 -46example/mnist_demo/mnist_evaluation.py

-

+26 -26example/mnist_demo/mnist_similarity_detector.py

-

+46 -23example/mnist_demo/mnist_train.py

-

+0 -38examples/README.md

-

+0 -16examples/__init__.py

-

+0 -24examples/ai_fuzzer/README.md

-

+0 -169examples/ai_fuzzer/fuzz_testing_and_model_enhense.py

-

+0 -85examples/ai_fuzzer/lenet5_mnist_coverage.py

-

+0 -107examples/ai_fuzzer/lenet5_mnist_fuzzing.py

-

+0 -116examples/common/dataset/data_processing.py

-

+0 -0examples/common/networks/__init__.py

-

+0 -0examples/common/networks/lenet5/__init__.py

-

+0 -14examples/common/networks/vgg/__init__.py

-

+0 -45examples/common/networks/vgg/config.py

-

+0 -39examples/common/networks/vgg/crossentropy.py

-

+0 -23examples/common/networks/vgg/linear_warmup.py

-

+0 -0examples/common/networks/vgg/utils/__init__.py

-

+0 -36examples/common/networks/vgg/utils/util.py

-

+0 -219examples/common/networks/vgg/utils/var_init.py

-

+0 -142examples/common/networks/vgg/vgg.py

-

+0 -40examples/common/networks/vgg/warmup_cosine_annealing_lr.py

-

+0 -84examples/common/networks/vgg/warmup_step_lr.py

-

+0 -0examples/community/__init__.py

-

+0 -40examples/model_security/README.md

-

+0 -0examples/model_security/__init__.py

-

+0 -0examples/model_security/model_attacks/__init__.py

-

+0 -0examples/model_security/model_attacks/black_box/__init__.py

-

+0 -47examples/model_security/model_attacks/cv/faster_rcnn/README.md

-

+0 -150examples/model_security/model_attacks/cv/faster_rcnn/coco_attack_genetic.py

-

+0 -135examples/model_security/model_attacks/cv/faster_rcnn/coco_attack_pgd.py

-

+0 -149examples/model_security/model_attacks/cv/faster_rcnn/coco_attack_pso.py

-

+0 -31examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/__init__.py

-

+0 -84examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/anchor_generator.py

-

+0 -166examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/bbox_assign_sample.py

-

+0 -197examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/bbox_assign_sample_stage2.py

-

+0 -428examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/faster_rcnn_r50.py

-

+0 -114examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/fpn_neck.py

-

+0 -201examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/proposal_generator.py

-

+0 -173examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/rcnn.py

-

+0 -250examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/resnet50.py

-

+0 -181examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/roi_align.py

-

+0 -315examples/model_security/model_attacks/cv/faster_rcnn/src/FasterRcnn/rpn.py

-

+0 -158examples/model_security/model_attacks/cv/faster_rcnn/src/config.py

-

+0 -505examples/model_security/model_attacks/cv/faster_rcnn/src/dataset.py

-

+0 -42examples/model_security/model_attacks/cv/faster_rcnn/src/lr_schedule.py

-

+0 -184examples/model_security/model_attacks/cv/faster_rcnn/src/network_define.py

-

+0 -227examples/model_security/model_attacks/cv/faster_rcnn/src/util.py

-

+0 -154examples/model_security/model_attacks/cv/yolov3_darknet53/coco_attack_deepfool.py

-

+0 -14examples/model_security/model_attacks/cv/yolov3_darknet53/src/__init__.py

-

+0 -68examples/model_security/model_attacks/cv/yolov3_darknet53/src/config.py

-

+0 -80examples/model_security/model_attacks/cv/yolov3_darknet53/src/convert_weight.py

-

+0 -212examples/model_security/model_attacks/cv/yolov3_darknet53/src/darknet.py

-

+0 -60examples/model_security/model_attacks/cv/yolov3_darknet53/src/distributed_sampler.py

-

+0 -204examples/model_security/model_attacks/cv/yolov3_darknet53/src/initializer.py

-

+0 -80examples/model_security/model_attacks/cv/yolov3_darknet53/src/logger.py

-

+0 -70examples/model_security/model_attacks/cv/yolov3_darknet53/src/loss.py

-

+0 -182examples/model_security/model_attacks/cv/yolov3_darknet53/src/lr_scheduler.py

-

+0 -595examples/model_security/model_attacks/cv/yolov3_darknet53/src/transforms.py

-

+0 -187examples/model_security/model_attacks/cv/yolov3_darknet53/src/util.py

-

+0 -441examples/model_security/model_attacks/cv/yolov3_darknet53/src/yolo.py

-

+0 -192examples/model_security/model_attacks/cv/yolov3_darknet53/src/yolo_dataset.py

-

+0 -0examples/model_security/model_attacks/white_box/__init__.py

-

+0 -111examples/model_security/model_attacks/white_box/mnist_attack_mdi2fgsm.py

-

+0 -0examples/model_security/model_defenses/__init__.py

-

+0 -116examples/model_security/model_defenses/mnist_defense_nad.py

-

+0 -66examples/privacy/README.md

+ 2

- 3

.gitee/PULL_REQUEST_TEMPLATE.md

View File

| @@ -11,7 +11,7 @@ If this is your first time, please read our contributor guidelines: https://gite | |||

| > /kind feature | |||

| **What does this PR do / why do we need it**: | |||

| **What this PR does / why we need it**: | |||

| **Which issue(s) this PR fixes**: | |||

| @@ -21,6 +21,5 @@ Usage: `Fixes #<issue number>`, or `Fixes (paste link of issue)`. | |||

| --> | |||

| Fixes # | |||

| **Special notes for your reviewers**: | |||

| **Special notes for your reviewer**: | |||

+ 0

- 19

.github/ISSUE_TEMPLATE/RFC.md

View File

| @@ -1,19 +0,0 @@ | |||

| --- | |||

| name: RFC | |||

| about: Use this template for the new feature or enhancement | |||

| labels: kind/feature or kind/enhancement | |||

| --- | |||

| ## Background | |||

| - Describe the status of the problem you wish to solve | |||

| - Attach the relevant issue if have | |||

| ## Introduction | |||

| - Describe the general solution, design and/or pseudo-code | |||

| ## Trail | |||

| | No. | Task Description | Related Issue(URL) | | |||

| | --- | ---------------- | ------------------ | | |||

| | 1 | | | | |||

| | 2 | | | | |||

+ 0

- 43

.github/ISSUE_TEMPLATE/bug-report.md

View File

| @@ -1,43 +0,0 @@ | |||

| --- | |||

| name: Bug Report | |||

| about: Use this template for reporting a bug | |||

| labels: kind/bug | |||

| --- | |||

| <!-- Thanks for sending an issue! Here are some tips for you: | |||

| If this is your first time, please read our contributor guidelines: https://github.com/mindspore-ai/mindspore/blob/master/CONTRIBUTING.md | |||

| --> | |||

| ## Environment | |||

| ### Hardware Environment(`Ascend`/`GPU`/`CPU`): | |||

| > Uncomment only one ` /device <>` line, hit enter to put that in a new line, and remove leading whitespaces from that line: | |||

| > | |||

| > `/device ascend`</br> | |||

| > `/device gpu`</br> | |||

| > `/device cpu`</br> | |||

| ### Software Environment: | |||

| - **MindSpore version (source or binary)**: | |||

| - **Python version (e.g., Python 3.7.5)**: | |||

| - **OS platform and distribution (e.g., Linux Ubuntu 16.04)**: | |||

| - **GCC/Compiler version (if compiled from source)**: | |||

| ## Describe the current behavior | |||

| ## Describe the expected behavior | |||

| ## Steps to reproduce the issue | |||

| 1. | |||

| 2. | |||

| 3. | |||

| ## Related log / screenshot | |||

| ## Special notes for this issue | |||

+ 0

- 19

.github/ISSUE_TEMPLATE/task-tracking.md

View File

| @@ -1,19 +0,0 @@ | |||

| --- | |||

| name: Task | |||

| about: Use this template for task tracking | |||

| labels: kind/task | |||

| --- | |||

| ## Task Description | |||

| ## Task Goal | |||

| ## Sub Task | |||

| | No. | Task Description | Issue ID | | |||

| | --- | ---------------- | -------- | | |||

| | 1 | | | | |||

| | 2 | | | | |||

+ 0

- 24

.github/PULL_REQUEST_TEMPLATE.md

View File

| @@ -1,24 +0,0 @@ | |||

| <!-- Thanks for sending a pull request! Here are some tips for you: | |||

| If this is your first time, please read our contributor guidelines: https://github.com/mindspore-ai/mindspore/blob/master/CONTRIBUTING.md | |||

| --> | |||

| **What type of PR is this?** | |||

| > Uncomment only one ` /kind <>` line, hit enter to put that in a new line, and remove leading whitespaces from that line: | |||

| > | |||

| > `/kind bug`</br> | |||

| > `/kind task`</br> | |||

| > `/kind feature`</br> | |||

| **What does this PR do / why do we need it**: | |||

| **Which issue(s) this PR fixes**: | |||

| <!-- | |||

| *Automatically closes linked issue when PR is merged. | |||

| Usage: `Fixes #<issue number>`, or `Fixes (paste link of issue)`. | |||

| --> | |||

| Fixes # | |||

| **Special notes for your reviewers**: | |||

+ 1

- 1

.gitignore

View File

| @@ -13,7 +13,7 @@ build/ | |||

| dist/ | |||

| local_script/ | |||

| example/dataset/ | |||

| example/mnist_demo/MNIST/ | |||

| example/mnist_demo/MNIST_unzip/ | |||

| example/mnist_demo/trained_ckpt_file/ | |||

| example/mnist_demo/model/ | |||

| example/cifar_demo/model/ | |||

+ 28

- 87

README.md

View File

| @@ -1,112 +1,53 @@ | |||

| # MindArmour | |||

| <!-- TOC --> | |||

| - [MindArmour](#mindarmour) | |||

| - [What is MindArmour](#what-is-mindarmour) | |||

| - [Adversarial Robustness Module](#adversarial-robustness-module) | |||

| - [Fuzz Testing Module](#fuzz-testing-module) | |||

| - [Privacy Protection and Evaluation Module](#privacy-protection-and-evaluation-module) | |||

| - [Differential Privacy Training Module](#differential-privacy-training-module) | |||

| - [Privacy Leakage Evaluation Module](#privacy-leakage-evaluation-module) | |||

| - [Starting](#starting) | |||

| - [System Environment Information Confirmation](#system-environment-information-confirmation) | |||

| - [Installation](#installation) | |||

| - [Installation by Source Code](#installation-by-source-code) | |||

| - [Installation by pip](#installation-by-pip) | |||

| - [Installation Verification](#installation-verification) | |||

| - [Docs](#docs) | |||

| - [Community](#community) | |||

| - [Contributing](#contributing) | |||

| - [Release Notes](#release-notes) | |||

| - [License](#license) | |||

| <!-- /TOC --> | |||

| [查看中文](./README_CN.md) | |||

| - [What is MindArmour](#what-is-mindarmour) | |||

| - [Setting up](#setting-up-mindarmour) | |||

| - [Docs](#docs) | |||

| - [Community](#community) | |||

| - [Contributing](#contributing) | |||

| - [Release Notes](#release-notes) | |||

| - [License](#license) | |||

| ## What is MindArmour | |||

| MindArmour focus on security and privacy of artificial intelligence. MindArmour can be used as a tool box for MindSpore users to enhance model security and trustworthiness and protect privacy data. MindArmour contains three module: Adversarial Robustness Module, Fuzz Testing Module, Privacy Protection and Evaluation Module. | |||

| A tool box for MindSpore users to enhance model security and trustworthiness. | |||

| ### Adversarial Robustness Module | |||

| MindArmour is designed for adversarial examples, including four submodule: adversarial examples generation, adversarial example detection, model defense and evaluation. The architecture is shown as follow: | |||

| Adversarial robustness module is designed for evaluating the robustness of the model against adversarial examples, and provides model enhancement methods to enhance the model's ability to resist the adversarial attack and improve the model's robustness. | |||

| This module includes four submodule: Adversarial Examples Generation, Adversarial Examples Detection, Model Defense and Evaluation. | |||

|  | |||

| The architecture is shown as follow: | |||

| ## Setting up MindArmour | |||

|  | |||

| ### Dependencies | |||

| ### Fuzz Testing Module | |||

| Fuzz Testing module is a security test for AI models. We introduce neuron coverage gain as a guide to fuzz testing according to the characteristics of neural networks. | |||

| Fuzz testing is guided to generate samples in the direction of increasing neuron coverage rate, so that the input can activate more neurons and neuron values have a wider distribution range to fully test neural networks and explore different types of model output results and wrong behaviors. | |||

| The architecture is shown as follow: | |||

|  | |||

| ### Privacy Protection and Evaluation Module | |||

| Privacy Protection and Evaluation Module includes two modules: Differential Privacy Training Module and Privacy Leakage Evaluation Module. | |||

| #### Differential Privacy Training Module | |||

| Differential Privacy Training Module implements the differential privacy optimizer. Currently, `SGD`, `Momentum` and `Adam` are supported. They are differential privacy optimizers based on the Gaussian mechanism. | |||

| This mechanism supports both non-adaptive and adaptive policy. Rényi differential privacy (RDP) and Zero-Concentrated differential privacy(ZCDP) are provided to monitor differential privacy budgets. | |||

| The architecture is shown as follow: | |||

|  | |||

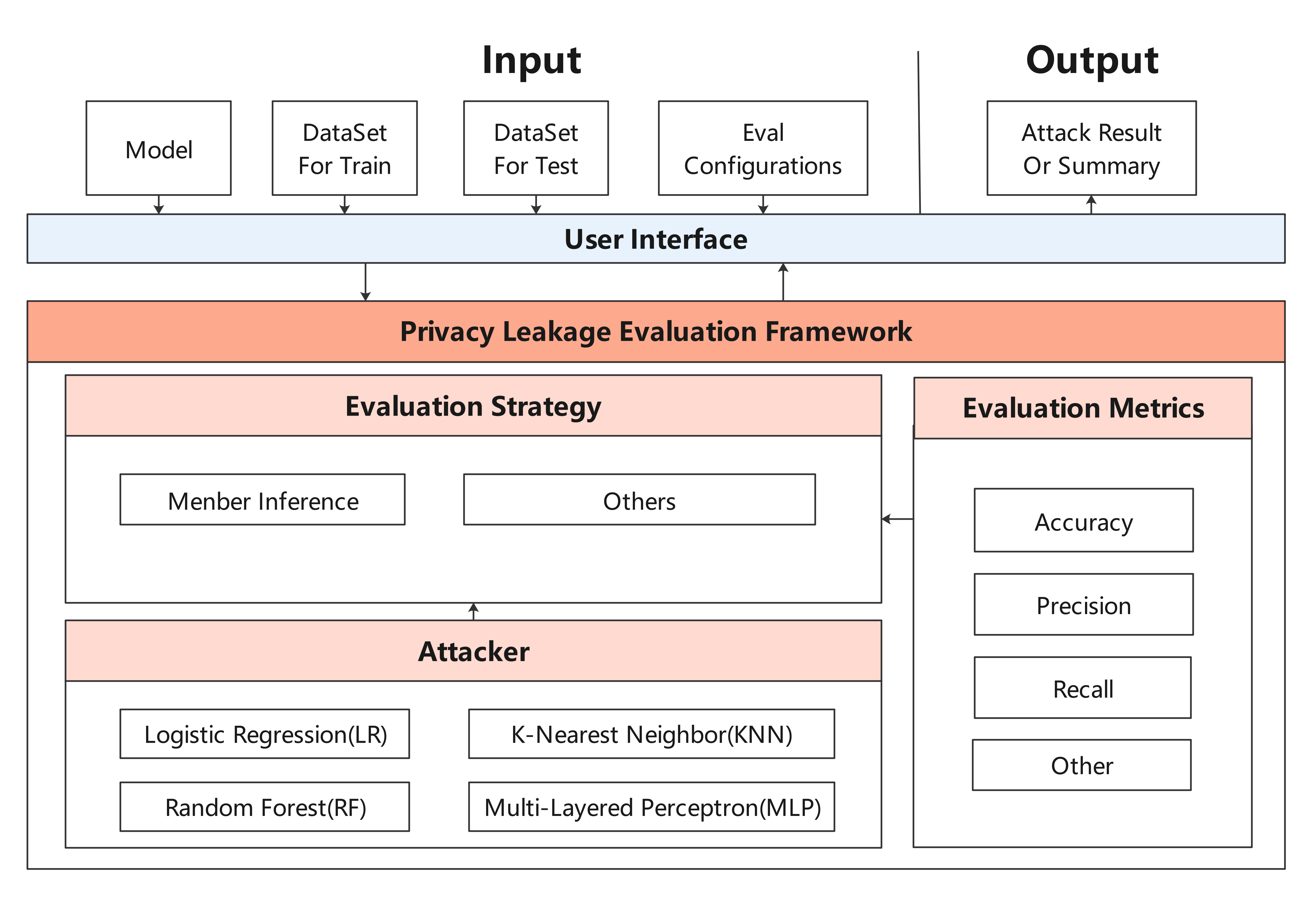

| #### Privacy Leakage Evaluation Module | |||

| Privacy Leakage Evaluation Module is used to assess the risk of a model revealing user privacy. The privacy data security of the deep learning model is evaluated by using membership inference method to infer whether the sample belongs to training dataset. | |||

| The architecture is shown as follow: | |||

|  | |||

| ## Starting | |||

| ### System Environment Information Confirmation | |||

| - The hardware platform should be Ascend, GPU or CPU. | |||

| - See our [MindSpore Installation Guide](https://www.mindspore.cn/install) to install MindSpore. | |||

| The versions of MindArmour and MindSpore must be consistent. | |||

| - All other dependencies are included in [setup.py](https://gitee.com/mindspore/mindarmour/blob/master/setup.py). | |||

| This library uses MindSpore to accelerate graph computations performed by many machine learning models. Therefore, installing MindSpore is a pre-requisite. All other dependencies are included in `setup.py`. | |||

| ### Installation | |||

| #### Installation by Source Code | |||

| #### Installation for development | |||

| 1. Download source code from Gitee. | |||

| ```bash | |||

| git clone https://gitee.com/mindspore/mindarmour.git | |||

| ``` | |||

| 2. Compile and install in MindArmour directory. | |||

| ```bash | |||

| cd mindarmour | |||

| python setup.py install | |||

| ``` | |||

| ```bash | |||

| git clone https://gitee.com/mindspore/mindarmour.git | |||

| ``` | |||

| #### Installation by pip | |||

| 2. Compile and install in MindArmour directory. | |||

| ```bash | |||

| pip install https://ms-release.obs.cn-north-4.myhuaweicloud.com/{version}/MindArmour/{arch}/mindarmour-{version}-cp37-cp37m-linux_{arch}.whl --trusted-host ms-release.obs.cn-north-4.myhuaweicloud.com -i https://pypi.tuna.tsinghua.edu.cn/simple | |||

| $ cd mindarmour | |||

| $ python setup.py install | |||

| ``` | |||

| > - When the network is connected, dependency items are automatically downloaded during .whl package installation. (For details about other dependency items, see [setup.py](https://gitee.com/mindspore/mindarmour/blob/master/setup.py)). In other cases, you need to manually install dependency items. | |||

| > - `{version}` denotes the version of MindArmour. For example, when you are downloading MindArmour 1.0.1, `{version}` should be 1.0.1. | |||

| > - `{arch}` denotes the system architecture. For example, the Linux system you are using is x86 architecture 64-bit, `{arch}` should be `x86_64`. If the system is ARM architecture 64-bit, then it should be `aarch64`. | |||

| #### `Pip` installation | |||

| 1. Download whl package from [MindSpore website](https://www.mindspore.cn/versions/en), then run the following command: | |||

| ### Installation Verification | |||

| ``` | |||

| pip install mindarmour-{version}-cp37-cp37m-linux_{arch}.whl | |||

| ``` | |||

| Successfully installed, if there is no error message such as `No module named 'mindarmour'` when execute the following command: | |||

| 2. Successfully installed, if there is no error message such as `No module named 'mindarmour'` when execute the following command: | |||

| ```bash | |||

| python -c 'import mindarmour' | |||

| @@ -118,7 +59,7 @@ Guidance on installation, tutorials, API, see our [User Documentation](https://g | |||

| ## Community | |||

| [MindSpore Slack](https://join.slack.com/t/mindspore/shared_invite/enQtOTcwMTIxMDI3NjM0LTNkMWM2MzI5NjIyZWU5ZWQ5M2EwMTQ5MWNiYzMxOGM4OWFhZjI4M2E5OGI2YTg3ODU1ODE2Njg1MThiNWI3YmQ) - Ask questions and find answers. | |||

| - [MindSpore Slack](https://join.slack.com/t/mindspore/shared_invite/enQtOTcwMTIxMDI3NjM0LTNkMWM2MzI5NjIyZWU5ZWQ5M2EwMTQ5MWNiYzMxOGM4OWFhZjI4M2E5OGI2YTg3ODU1ODE2Njg1MThiNWI3YmQ) - Ask questions and find answers. | |||

| ## Contributing | |||

+ 0

- 130

README_CN.md

View File

| @@ -1,130 +0,0 @@ | |||

| # MindArmour | |||

| <!-- TOC --> | |||

| - [MindArmour](#mindarmour) | |||

| - [简介](#简介) | |||

| - [对抗样本鲁棒性模块](#对抗样本鲁棒性模块) | |||

| - [Fuzz Testing模块](#fuzz-testing模块) | |||

| - [隐私保护模块](#隐私保护模块) | |||

| - [差分隐私训练模块](#差分隐私训练模块) | |||

| - [隐私泄露评估模块](#隐私泄露评估模块) | |||

| - [开始](#开始) | |||

| - [确认系统环境信息](#确认系统环境信息) | |||

| - [安装](#安装) | |||

| - [源码安装](#源码安装) | |||

| - [pip安装](#pip安装) | |||

| - [验证是否成功安装](#验证是否成功安装) | |||

| - [文档](#文档) | |||

| - [社区](#社区) | |||

| - [贡献](#贡献) | |||

| - [版本](#版本) | |||

| - [版权](#版权) | |||

| <!-- /TOC --> | |||

| [View English](./README.md) | |||

| ## 简介 | |||

| MindArmour关注AI的安全和隐私问题。致力于增强模型的安全可信、保护用户的数据隐私。主要包含3个模块:对抗样本鲁棒性模块、Fuzz Testing模块、隐私保护与评估模块。 | |||

| ### 对抗样本鲁棒性模块 | |||

| 对抗样本鲁棒性模块用于评估模型对于对抗样本的鲁棒性,并提供模型增强方法用于增强模型抗对抗样本攻击的能力,提升模型鲁棒性。对抗样本鲁棒性模块包含了4个子模块:对抗样本的生成、对抗样本的检测、模型防御、攻防评估。 | |||

| 对抗样本鲁棒性模块的架构图如下: | |||

|  | |||

| ### Fuzz Testing模块 | |||

| Fuzz Testing模块是针对AI模型的安全测试,根据神经网络的特点,引入神经元覆盖率,作为Fuzz测试的指导,引导Fuzzer朝着神经元覆盖率增加的方向生成样本,让输入能够激活更多的神经元,神经元值的分布范围更广,以充分测试神经网络,探索不同类型的模型输出结果和错误行为。 | |||

| Fuzz Testing模块的架构图如下: | |||

|  | |||

| ### 隐私保护模块 | |||

| 隐私保护模块包含差分隐私训练与隐私泄露评估。 | |||

| #### 差分隐私训练模块 | |||

| 差分隐私训练包括动态或者非动态的差分隐私`SGD`、`Momentum`、`Adam`优化器,噪声机制支持高斯分布噪声、拉普拉斯分布噪声,差分隐私预算监测包含ZCDP、RDP。 | |||

| 差分隐私的架构图如下: | |||

|  | |||

| #### 隐私泄露评估模块 | |||

| 隐私泄露评估模块用于评估模型泄露用户隐私的风险。利用成员推理方法来推测样本是否属于用户训练数据集,从而评估深度学习模型的隐私数据安全。 | |||

| 隐私泄露评估模块框架图如下: | |||

|  | |||

| ## 开始 | |||

| ### 确认系统环境信息 | |||

| - 硬件平台为Ascend、GPU或CPU。 | |||

| - 参考[MindSpore安装指南](https://www.mindspore.cn/install),完成MindSpore的安装。 | |||

| MindArmour与MindSpore的版本需保持一致。 | |||

| - 其余依赖请参见[setup.py](https://gitee.com/mindspore/mindarmour/blob/master/setup.py)。 | |||

| ### 安装 | |||

| #### 源码安装 | |||

| 1. 从Gitee下载源码。 | |||

| ```bash | |||

| git clone https://gitee.com/mindspore/mindarmour.git | |||

| ``` | |||

| 2. 在源码根目录下,执行如下命令编译并安装MindArmour。 | |||

| ```bash | |||

| cd mindarmour | |||

| python setup.py install | |||

| ``` | |||

| #### pip安装 | |||

| ```bash | |||

| pip install https://ms-release.obs.cn-north-4.myhuaweicloud.com/{version}/MindArmour/{arch}/mindarmour-{version}-cp37-cp37m-linux_{arch}.whl --trusted-host ms-release.obs.cn-north-4.myhuaweicloud.com -i https://pypi.tuna.tsinghua.edu.cn/simple | |||

| ``` | |||

| > - 在联网状态下,安装whl包时会自动下载MindArmour安装包的依赖项(依赖项详情参见[setup.py](https://gitee.com/mindspore/mindarmour/blob/master/setup.py)),其余情况需自行安装。 | |||

| > - `{version}`表示MindArmour版本号,例如下载1.0.1版本MindArmour时,`{version}`应写为1.0.1。 | |||

| > - `{arch}`表示系统架构,例如使用的Linux系统是x86架构64位时,`{arch}`应写为`x86_64`。如果系统是ARM架构64位,则写为`aarch64`。 | |||

| ### 验证是否成功安装 | |||

| 执行如下命令,如果没有报错`No module named 'mindarmour'`,则说明安装成功。 | |||

| ```bash | |||

| python -c 'import mindarmour' | |||

| ``` | |||

| ## 文档 | |||

| 安装指导、使用教程、API,请参考[用户文档](https://gitee.com/mindspore/docs)。 | |||

| ## 社区 | |||

| 社区问答:[MindSpore Slack](https://join.slack.com/t/mindspore/shared_invite/enQtOTcwMTIxMDI3NjM0LTNkMWM2MzI5NjIyZWU5ZWQ5M2EwMTQ5MWNiYzMxOGM4OWFhZjI4M2E5OGI2YTg3ODU1ODE2Njg1MThiNWI3YmQ)。 | |||

| ## 贡献 | |||

| 欢迎参与社区贡献,详情参考[Contributor Wiki](https://gitee.com/mindspore/mindspore/blob/master/CONTRIBUTING.md)。 | |||

| ## 版本 | |||

| 版本信息参考:[RELEASE](RELEASE.md)。 | |||

| ## 版权 | |||

| [Apache License 2.0](LICENSE) | |||

+ 5

- 248

RELEASE.md

View File

| @@ -1,254 +1,11 @@ | |||

| # MindArmour 1.2.0 | |||

| ## MindArmour 1.2.0 Release Notes | |||

| ### Major Features and Improvements | |||

| #### Privacy | |||

| * [STABLE]Tailored-based privacy protection technology (Pynative) | |||

| * [STABLE]Model Inversion. Reverse analysis technology of privacy information | |||

| ### API Change | |||

| #### Backwards Incompatible Change | |||

| ##### C++ API | |||

| [Modify] ... | |||

| [Add] ... | |||

| [Delete] ... | |||

| ##### Java API | |||

| [Add] ... | |||

| #### Deprecations | |||

| ##### C++ API | |||

| ##### Java API | |||

| ### Bug fixes | |||

| [BUGFIX] ... | |||

| ### Contributors | |||

| Thanks goes to these wonderful people: | |||

| han.yin | |||

| # MindArmour 1.1.0 Release Notes | |||

| ## MindArmour | |||

| ### Major Features and Improvements | |||

| * [STABLE] Attack capability of the Object Detection models. | |||

| * Some white-box adversarial attacks, such as [iterative] gradient method and DeepFool now can be applied to Object Detection models. | |||

| * Some black-box adversarial attacks, such as PSO and Genetic Attack now can be applied to Object Detection models. | |||

| ### Backwards Incompatible Change | |||

| #### Python API | |||

| #### C++ API | |||

| ### Deprecations | |||

| #### Python API | |||

| #### C++ API | |||

| ### New Features | |||

| #### Python API | |||

| #### C++ API | |||

| ### Improvements | |||

| #### Python API | |||

| #### C++ API | |||

| ### Bug fixes | |||

| #### Python API | |||

| #### C++ API | |||

| ## Contributors | |||

| Thanks goes to these wonderful people: | |||

| Xiulang Jin, Zhidan Liu, Luobin Liu and Liu Liu. | |||

| Contributions of any kind are welcome! | |||

| # Release 1.0.0 | |||

| ## Major Features and Improvements | |||

| ### Differential privacy model training | |||

| * Privacy leakage evaluation. | |||

| * Parameter verification enhancement. | |||

| * Support parallel computing. | |||

| ### Model robustness evaluation | |||

| * Fuzzing based Adversarial Robustness testing. | |||

| * Parameter verification enhancement. | |||

| ### Other | |||

| * Api & Directory Structure | |||

| * Adjusted the directory structure based on different features. | |||

| * Optimize the structure of examples. | |||

| ## Bugfixes | |||

| ## Contributors | |||

| Thanks goes to these wonderful people: | |||

| Liu Liu, Xiulang Jin, Zhidan Liu and Luobin Liu. | |||

| Contributions of any kind are welcome! | |||

| # Release 0.7.0-beta | |||

| ## Major Features and Improvements | |||

| ### Differential privacy model training | |||

| * Privacy leakage evaluation. | |||

| * Using Membership inference to evaluate the effectiveness of privacy-preserving techniques for AI. | |||

| ### Model robustness evaluation | |||

| * Fuzzing based Adversarial Robustness testing. | |||

| * Coverage-guided test set generation. | |||

| ## Bugfixes | |||

| ## Contributors | |||

| Thanks goes to these wonderful people: | |||

| Liu Liu, Xiulang Jin, Zhidan Liu, Luobin Liu and Huanhuan Zheng. | |||

| Contributions of any kind are welcome! | |||

| # Release 0.6.0-beta | |||

| ## Major Features and Improvements | |||

| ### Differential privacy model training | |||

| * Optimizers with differential privacy | |||

| * Differential privacy model training now supports some new policies. | |||

| * Adaptive Norm policy is supported. | |||

| * Adaptive Noise policy with exponential decrease is supported. | |||

| * Differential Privacy Training Monitor | |||

| * A new monitor is supported using zCDP as its asymptotic budget estimator. | |||

| ## Bugfixes | |||

| ## Contributors | |||

| Thanks goes to these wonderful people: | |||

| Liu Liu, Huanhuan Zheng, XiuLang jin, Zhidan liu. | |||

| Contributions of any kind are welcome. | |||

| # Release 0.5.0-beta | |||

| ## Major Features and Improvements | |||

| ### Differential privacy model training | |||

| * Optimizers with differential privacy | |||

| * Differential privacy model training now supports both Pynative mode and graph mode. | |||

| * Graph mode is recommended for its performance. | |||

| ## Bugfixes | |||

| ## Contributors | |||

| Thanks goes to these wonderful people: | |||

| Liu Liu, Huanhuan Zheng, Xiulang Jin, Zhidan Liu. | |||

| Contributions of any kind are welcome! | |||

| # Release 0.3.0-alpha | |||

| ## Major Features and Improvements | |||

| ### Differential Privacy Model Training | |||

| Differential Privacy is coming! By using Differential-Privacy-Optimizers, one can still train a model as usual, while the trained model preserved the privacy of training dataset, satisfying the definition of | |||

| differential privacy with proper budget. | |||

| * Optimizers with Differential Privacy([PR23](https://gitee.com/mindspore/mindarmour/pulls/23), [PR24](https://gitee.com/mindspore/mindarmour/pulls/24)) | |||

| * Some common optimizers now have a differential privacy version (SGD/Adam). We are adding more. | |||

| * Automatically and adaptively add Gaussian Noise during training to achieve Differential Privacy. | |||

| * Automatically stop training when Differential Privacy Budget exceeds. | |||

| * Differential Privacy Monitor([PR22](https://gitee.com/mindspore/mindarmour/pulls/22)) | |||

| * Calculate overall budget consumed during training, indicating the ultimate protect effect. | |||

| ## Bug fixes | |||

| ## Contributors | |||

| Thanks goes to these wonderful people: | |||

| Liu Liu, Huanhuan Zheng, Zhidan Liu, Xiulang Jin | |||

| Contributions of any kind are welcome! | |||

| # Release 0.2.0-alpha | |||

| ## Major Features and Improvements | |||

| * Add a white-box attack method: M-DI2-FGSM([PR14](https://gitee.com/mindspore/mindarmour/pulls/14)). | |||

| * Add three neuron coverage metrics: KMNCov, NBCov, SNACov([PR12](https://gitee.com/mindspore/mindarmour/pulls/12)). | |||

| * Add a coverage-guided fuzzing test framework for deep neural networks([PR13](https://gitee.com/mindspore/mindarmour/pulls/13)). | |||

| * Update the MNIST Lenet5 examples. | |||

| * Remove some duplicate code. | |||

| ## Bug fixes | |||

| ## Contributors | |||

| Thanks goes to these wonderful people: | |||

| Liu Liu, Huanhuan Zheng, Zhidan Liu, Xiulang Jin | |||

| Contributions of any kind are welcome! | |||

| # Release 0.1.0-alpha | |||

| Initial release of MindArmour. | |||

| ## Major Features | |||

| * Support adversarial attack and defense on the platform of MindSpore. | |||

| * Include 13 white-box and 7 black-box attack methods. | |||

| * Provide 5 detection algorithms to detect attacking in multiple way. | |||

| * Provide adversarial training to enhance model security. | |||

| * Provide 6 evaluation metrics for attack methods and 9 evaluation metrics for defense methods. | |||

| - Support adversarial attack and defense on the platform of MindSpore. | |||

| - Include 13 white-box and 7 black-box attack methods. | |||

| - Provide 5 detection algorithms to detect attacking in multiple way. | |||

| - Provide adversarial training to enhance model security. | |||

| - Provide 6 evaluation metrics for attack methods and 9 evaluation metrics for defense methods. | |||

BIN

docs/adversarial_robustness_cn.png

View File

BIN

docs/adversarial_robustness_en.png

View File

BIN

docs/differential_privacy_architecture_cn.png

View File

BIN

docs/differential_privacy_architecture_en.png

View File

BIN

docs/fuzzer_architecture_cn.png

View File

BIN

docs/fuzzer_architecture_en.png

View File

BIN

docs/mindarmour_architecture.png

View File

BIN

docs/privacy_leakage_cn.png

View File

BIN

docs/privacy_leakage_en.png

View File

+ 62

- 0

example/data_processing.py

View File

| @@ -0,0 +1,62 @@ | |||

| # Copyright 2019 Huawei Technologies Co., Ltd | |||

| # | |||

| # Licensed under the Apache License, Version 2.0 (the "License"); | |||

| # you may not use this file except in compliance with the License. | |||

| # You may obtain a copy of the License at | |||

| # | |||

| # http://www.apache.org/licenses/LICENSE-2.0 | |||

| # | |||

| # Unless required by applicable law or agreed to in writing, software | |||

| # distributed under the License is distributed on an "AS IS" BASIS, | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import mindspore.dataset as ds | |||

| import mindspore.dataset.transforms.vision.c_transforms as CV | |||

| import mindspore.dataset.transforms.c_transforms as C | |||

| from mindspore.dataset.transforms.vision import Inter | |||

| import mindspore.common.dtype as mstype | |||

| def generate_mnist_dataset(data_path, batch_size=32, repeat_size=1, | |||

| num_parallel_workers=1, sparse=True): | |||

| """ | |||

| create dataset for training or testing | |||

| """ | |||

| # define dataset | |||

| ds1 = ds.MnistDataset(data_path) | |||

| # define operation parameters | |||

| resize_height, resize_width = 32, 32 | |||

| rescale = 1.0 / 255.0 | |||

| shift = 0.0 | |||

| # define map operations | |||

| resize_op = CV.Resize((resize_height, resize_width), | |||

| interpolation=Inter.LINEAR) | |||

| rescale_op = CV.Rescale(rescale, shift) | |||

| hwc2chw_op = CV.HWC2CHW() | |||

| type_cast_op = C.TypeCast(mstype.int32) | |||

| one_hot_enco = C.OneHot(10) | |||

| # apply map operations on images | |||

| if not sparse: | |||

| ds1 = ds1.map(input_columns="label", operations=one_hot_enco, | |||

| num_parallel_workers=num_parallel_workers) | |||

| type_cast_op = C.TypeCast(mstype.float32) | |||

| ds1 = ds1.map(input_columns="label", operations=type_cast_op, | |||

| num_parallel_workers=num_parallel_workers) | |||

| ds1 = ds1.map(input_columns="image", operations=resize_op, | |||

| num_parallel_workers=num_parallel_workers) | |||

| ds1 = ds1.map(input_columns="image", operations=rescale_op, | |||

| num_parallel_workers=num_parallel_workers) | |||

| ds1 = ds1.map(input_columns="image", operations=hwc2chw_op, | |||

| num_parallel_workers=num_parallel_workers) | |||

| # apply DatasetOps | |||

| buffer_size = 10000 | |||

| ds1 = ds1.shuffle(buffer_size=buffer_size) | |||

| ds1 = ds1.batch(batch_size, drop_remainder=True) | |||

| ds1 = ds1.repeat(repeat_size) | |||

| return ds1 | |||

+ 46

- 0

example/mnist_demo/README.md

View File

| @@ -0,0 +1,46 @@ | |||

| # mnist demo | |||

| ## Introduction | |||

| The MNIST database of handwritten digits, available from this page, has a training set of 60,000 examples, and a test set of 10,000 examples. It is a subset of a larger set available from MNIST. The digits have been size-normalized and centered in a fixed-size image. | |||

| ## run demo | |||

| ### 1. download dataset | |||

| ```sh | |||

| $ cd example/mnist_demo | |||

| $ mkdir MNIST_unzip | |||

| $ cd MNIST_unzip | |||

| $ mkdir train | |||

| $ mkdir test | |||

| $ cd train | |||

| $ wget "http://yann.lecun.com/exdb/mnist/train-images-idx3-ubyte.gz" | |||

| $ wget "http://yann.lecun.com/exdb/mnist/train-labels-idx1-ubyte.gz" | |||

| $ gzip train-images-idx3-ubyte.gz -d | |||

| $ gzip train-labels-idx1-ubyte.gz -d | |||

| $ cd ../test | |||

| $ wget "http://yann.lecun.com/exdb/mnist/t10k-images-idx3-ubyte.gz" | |||

| $ wget "http://yann.lecun.com/exdb/mnist/t10k-labels-idx1-ubyte.gz" | |||

| $ gzip t10k-images-idx3-ubyte.gz -d | |||

| $ gzip t10k-images-idx3-ubyte.gz -d | |||

| $ cd ../../ | |||

| ``` | |||

| ### 1. trian model | |||

| ```sh | |||

| $ python mnist_train.py | |||

| ``` | |||

| ### 2. run attack test | |||

| ```sh | |||

| $ mkdir out.data | |||

| $ python mnist_attack_jsma.py | |||

| ``` | |||

| ### 3. run defense/detector test | |||

| ```sh | |||

| $ python mnist_defense_nad.py | |||

| $ python mnist_similarity_detector.py | |||

| ``` | |||

examples/common/networks/lenet5/lenet5_net.py → example/mnist_demo/lenet5_net.py

View File

| @@ -11,7 +11,8 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| from mindspore import nn | |||

| import mindspore.nn as nn | |||

| import mindspore.ops.operations as P | |||

| from mindspore.common.initializer import TruncatedNormal | |||

| @@ -29,7 +30,7 @@ def fc_with_initialize(input_channels, out_channels): | |||

| def weight_variable(): | |||

| return TruncatedNormal(0.05) | |||

| return TruncatedNormal(0.2) | |||

| class LeNet5(nn.Cell): | |||

| @@ -45,7 +46,7 @@ class LeNet5(nn.Cell): | |||

| self.fc3 = fc_with_initialize(84, 10) | |||

| self.relu = nn.ReLU() | |||

| self.max_pool2d = nn.MaxPool2d(kernel_size=2, stride=2) | |||

| self.flatten = nn.Flatten() | |||

| self.reshape = P.Reshape() | |||

| def construct(self, x): | |||

| x = self.conv1(x) | |||

| @@ -54,7 +55,7 @@ class LeNet5(nn.Cell): | |||

| x = self.conv2(x) | |||

| x = self.relu(x) | |||

| x = self.max_pool2d(x) | |||

| x = self.flatten(x) | |||

| x = self.reshape(x, (-1, 16*5*5)) | |||

| x = self.fc1(x) | |||

| x = self.relu(x) | |||

| x = self.fc2(x) | |||

examples/model_security/model_attacks/white_box/mnist_attack_cw.py → example/mnist_demo/mnist_attack_cw.py

View File

| @@ -11,8 +11,10 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import sys | |||

| import time | |||

| import numpy as np | |||

| import pytest | |||

| from scipy.special import softmax | |||

| from mindspore import Model | |||

| @@ -20,30 +22,38 @@ from mindspore import Tensor | |||

| from mindspore import context | |||

| from mindspore.train.serialization import load_checkpoint, load_param_into_net | |||

| from mindarmour.adv_robustness.attacks import CarliniWagnerL2Attack | |||

| from mindarmour.adv_robustness.evaluations import AttackEvaluate | |||

| from mindarmour.attacks.carlini_wagner import CarliniWagnerL2Attack | |||

| from mindarmour.utils.logger import LogUtil | |||

| from mindarmour.evaluations.attack_evaluation import AttackEvaluate | |||

| from examples.common.networks.lenet5.lenet5_net import LeNet5 | |||

| from examples.common.dataset.data_processing import generate_mnist_dataset | |||

| from lenet5_net import LeNet5 | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="Ascend") | |||

| sys.path.append("..") | |||

| from data_processing import generate_mnist_dataset | |||

| LOGGER = LogUtil.get_instance() | |||

| LOGGER.set_level('INFO') | |||

| TAG = 'CW_Test' | |||

| @pytest.mark.level1 | |||

| @pytest.mark.platform_arm_ascend_training | |||

| @pytest.mark.platform_x86_ascend_training | |||

| @pytest.mark.env_card | |||

| @pytest.mark.component_mindarmour | |||

| def test_carlini_wagner_attack(): | |||

| """ | |||

| CW-Attack test | |||

| """ | |||

| # upload trained network | |||

| ckpt_path = '../../../common/networks/lenet5/trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| ckpt_name = './trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| net = LeNet5() | |||

| load_dict = load_checkpoint(ckpt_path) | |||

| load_dict = load_checkpoint(ckpt_name) | |||

| load_param_into_net(net, load_dict) | |||

| # get test data | |||

| data_list = "../../../common/dataset/MNIST/test" | |||

| data_list = "./MNIST_unzip/test" | |||

| batch_size = 32 | |||

| ds = generate_mnist_dataset(data_list, batch_size=batch_size) | |||

| @@ -54,7 +64,7 @@ def test_carlini_wagner_attack(): | |||

| test_labels = [] | |||

| predict_labels = [] | |||

| i = 0 | |||

| for data in ds.create_tuple_iterator(output_numpy=True): | |||

| for data in ds.create_tuple_iterator(): | |||

| i += 1 | |||

| images = data[0].astype(np.float32) | |||

| labels = data[1] | |||

| @@ -105,6 +115,4 @@ def test_carlini_wagner_attack(): | |||

| if __name__ == '__main__': | |||

| # device_target can be "CPU", "GPU" or "Ascend" | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="CPU") | |||

| test_carlini_wagner_attack() | |||

examples/model_security/model_attacks/white_box/mnist_attack_deepfool.py → example/mnist_demo/mnist_attack_deepfool.py

View File

| @@ -11,8 +11,10 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import sys | |||

| import time | |||

| import numpy as np | |||

| import pytest | |||

| from scipy.special import softmax | |||

| from mindspore import Model | |||

| @@ -20,30 +22,39 @@ from mindspore import Tensor | |||

| from mindspore import context | |||

| from mindspore.train.serialization import load_checkpoint, load_param_into_net | |||

| from mindarmour.adv_robustness.attacks.deep_fool import DeepFool | |||

| from mindarmour.adv_robustness.evaluations import AttackEvaluate | |||

| from mindarmour.attacks.deep_fool import DeepFool | |||

| from mindarmour.utils.logger import LogUtil | |||

| from mindarmour.evaluations.attack_evaluation import AttackEvaluate | |||

| from examples.common.networks.lenet5.lenet5_net import LeNet5 | |||

| from examples.common.dataset.data_processing import generate_mnist_dataset | |||

| from lenet5_net import LeNet5 | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="Ascend") | |||

| sys.path.append("..") | |||

| from data_processing import generate_mnist_dataset | |||

| LOGGER = LogUtil.get_instance() | |||

| LOGGER.set_level('INFO') | |||

| TAG = 'DeepFool_Test' | |||

| @pytest.mark.level1 | |||

| @pytest.mark.platform_arm_ascend_training | |||

| @pytest.mark.platform_x86_ascend_training | |||

| @pytest.mark.env_card | |||

| @pytest.mark.component_mindarmour | |||

| def test_deepfool_attack(): | |||

| """ | |||

| DeepFool-Attack test | |||

| """ | |||

| # upload trained network | |||

| ckpt_path = '../../../common/networks/lenet5/trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| ckpt_name = './trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| net = LeNet5() | |||

| load_dict = load_checkpoint(ckpt_path) | |||

| load_dict = load_checkpoint(ckpt_name) | |||

| load_param_into_net(net, load_dict) | |||

| # get test data | |||

| data_list = "../../../common/dataset/MNIST/test" | |||

| data_list = "./MNIST_unzip/test" | |||

| batch_size = 32 | |||

| ds = generate_mnist_dataset(data_list, batch_size=batch_size) | |||

| @@ -54,7 +65,7 @@ def test_deepfool_attack(): | |||

| test_labels = [] | |||

| predict_labels = [] | |||

| i = 0 | |||

| for data in ds.create_tuple_iterator(output_numpy=True): | |||

| for data in ds.create_tuple_iterator(): | |||

| i += 1 | |||

| images = data[0].astype(np.float32) | |||

| labels = data[1] | |||

| @@ -106,6 +117,4 @@ def test_deepfool_attack(): | |||

| if __name__ == '__main__': | |||

| # device_target can be "CPU", "GPU" or "Ascend" | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="CPU") | |||

| test_deepfool_attack() | |||

examples/model_security/model_attacks/white_box/mnist_attack_fgsm.py → example/mnist_demo/mnist_attack_fgsm.py

View File

| @@ -11,42 +11,53 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import sys | |||

| import time | |||

| import numpy as np | |||

| import pytest | |||

| from scipy.special import softmax | |||

| from mindspore import Model | |||

| from mindspore import Tensor | |||

| from mindspore import context | |||

| from mindspore.train.serialization import load_checkpoint, load_param_into_net | |||

| from mindspore.nn import SoftmaxCrossEntropyWithLogits | |||

| from mindarmour.adv_robustness.attacks import FastGradientSignMethod | |||

| from mindarmour.adv_robustness.evaluations import AttackEvaluate | |||

| from mindarmour.attacks.gradient_method import FastGradientSignMethod | |||

| from mindarmour.utils.logger import LogUtil | |||

| from mindarmour.evaluations.attack_evaluation import AttackEvaluate | |||

| from lenet5_net import LeNet5 | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="Ascend") | |||

| from examples.common.networks.lenet5.lenet5_net import LeNet5 | |||

| from examples.common.dataset.data_processing import generate_mnist_dataset | |||

| sys.path.append("..") | |||

| from data_processing import generate_mnist_dataset | |||

| LOGGER = LogUtil.get_instance() | |||

| LOGGER.set_level('INFO') | |||

| TAG = 'FGSM_Test' | |||

| @pytest.mark.level1 | |||

| @pytest.mark.platform_arm_ascend_training | |||

| @pytest.mark.platform_x86_ascend_training | |||

| @pytest.mark.env_card | |||

| @pytest.mark.component_mindarmour | |||

| def test_fast_gradient_sign_method(): | |||

| """ | |||

| FGSM-Attack test for CPU device. | |||

| FGSM-Attack test | |||

| """ | |||

| # upload trained network | |||

| ckpt_path = '../../../common/networks/lenet5/trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| ckpt_name = './trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| net = LeNet5() | |||

| load_dict = load_checkpoint(ckpt_path) | |||

| load_dict = load_checkpoint(ckpt_name) | |||

| load_param_into_net(net, load_dict) | |||

| # get test data | |||

| data_list = "../../../common/dataset/MNIST/test" | |||

| data_list = "./MNIST_unzip/test" | |||

| batch_size = 32 | |||

| ds = generate_mnist_dataset(data_list, batch_size) | |||

| ds = generate_mnist_dataset(data_list, batch_size, sparse=False) | |||

| # prediction accuracy before attack | |||

| model = Model(net) | |||

| @@ -55,7 +66,7 @@ def test_fast_gradient_sign_method(): | |||

| test_labels = [] | |||

| predict_labels = [] | |||

| i = 0 | |||

| for data in ds.create_tuple_iterator(output_numpy=True): | |||

| for data in ds.create_tuple_iterator(): | |||

| i += 1 | |||

| images = data[0].astype(np.float32) | |||

| labels = data[1] | |||

| @@ -67,16 +78,15 @@ def test_fast_gradient_sign_method(): | |||

| if i >= batch_num: | |||

| break | |||

| predict_labels = np.concatenate(predict_labels) | |||

| true_labels = np.concatenate(test_labels) | |||

| true_labels = np.argmax(np.concatenate(test_labels), axis=1) | |||

| accuracy = np.mean(np.equal(predict_labels, true_labels)) | |||

| LOGGER.info(TAG, "prediction accuracy before attacking is : %s", accuracy) | |||

| # attacking | |||

| loss = SoftmaxCrossEntropyWithLogits(sparse=True) | |||

| attack = FastGradientSignMethod(net, eps=0.3, loss_fn=loss) | |||

| attack = FastGradientSignMethod(net, eps=0.3) | |||

| start_time = time.clock() | |||

| adv_data = attack.batch_generate(np.concatenate(test_images), | |||

| true_labels, batch_size=32) | |||

| np.concatenate(test_labels), batch_size=32) | |||

| stop_time = time.clock() | |||

| np.save('./adv_data', adv_data) | |||

| pred_logits_adv = model.predict(Tensor(adv_data)).asnumpy() | |||

| @@ -86,7 +96,7 @@ def test_fast_gradient_sign_method(): | |||

| accuracy_adv = np.mean(np.equal(pred_labels_adv, true_labels)) | |||

| LOGGER.info(TAG, "prediction accuracy after attacking is : %s", accuracy_adv) | |||

| attack_evaluate = AttackEvaluate(np.concatenate(test_images).transpose(0, 2, 3, 1), | |||

| np.eye(10)[true_labels], | |||

| np.concatenate(test_labels), | |||

| adv_data.transpose(0, 2, 3, 1), | |||

| pred_logits_adv) | |||

| LOGGER.info(TAG, 'mis-classification rate of adversaries is : %s', | |||

| @@ -106,6 +116,4 @@ def test_fast_gradient_sign_method(): | |||

| if __name__ == '__main__': | |||

| # device_target can be "CPU", "GPU" or "Ascend" | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="CPU") | |||

| test_fast_gradient_sign_method() | |||

examples/model_security/model_attacks/black_box/mnist_attack_genetic.py → example/mnist_demo/mnist_attack_genetic.py

View File

| @@ -11,24 +11,29 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import sys | |||

| import time | |||

| from scipy.special import softmax | |||

| import numpy as np | |||

| import pytest | |||

| from scipy.special import softmax | |||

| from mindspore import Tensor | |||

| from mindspore import context | |||

| from mindspore.train.serialization import load_checkpoint, load_param_into_net | |||

| from mindarmour.adv_robustness.attacks.black.black_model import BlackModel | |||

| from mindarmour.adv_robustness.attacks.black.genetic_attack import GeneticAttack | |||

| from mindarmour.adv_robustness.evaluations import AttackEvaluate | |||

| from mindarmour.attacks.black.genetic_attack import GeneticAttack | |||

| from mindarmour.attacks.black.black_model import BlackModel | |||

| from mindarmour.utils.logger import LogUtil | |||

| from mindarmour.evaluations.attack_evaluation import AttackEvaluate | |||

| from lenet5_net import LeNet5 | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="Ascend") | |||

| from examples.common.dataset.data_processing import generate_mnist_dataset | |||

| from examples.common.networks.lenet5.lenet5_net import LeNet5 | |||

| sys.path.append("..") | |||

| from data_processing import generate_mnist_dataset | |||

| LOGGER = LogUtil.get_instance() | |||

| LOGGER.set_level('INFO') | |||

| TAG = 'Genetic_Attack' | |||

| @@ -41,25 +46,27 @@ class ModelToBeAttacked(BlackModel): | |||

| def predict(self, inputs): | |||

| """predict""" | |||

| # Adapt to the input shape requirements of the target network if inputs is only one image. | |||

| if len(inputs.shape) == 3: | |||

| inputs = np.expand_dims(inputs, axis=0) | |||

| result = self._network(Tensor(inputs.astype(np.float32))) | |||

| return result.asnumpy() | |||

| @pytest.mark.level1 | |||

| @pytest.mark.platform_arm_ascend_training | |||

| @pytest.mark.platform_x86_ascend_training | |||

| @pytest.mark.env_card | |||

| @pytest.mark.component_mindarmour | |||

| def test_genetic_attack_on_mnist(): | |||

| """ | |||

| Genetic-Attack test | |||

| """ | |||

| # upload trained network | |||

| ckpt_path = '../../../common/networks/lenet5/trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| ckpt_name = './trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| net = LeNet5() | |||

| load_dict = load_checkpoint(ckpt_path) | |||

| load_dict = load_checkpoint(ckpt_name) | |||

| load_param_into_net(net, load_dict) | |||

| # get test data | |||

| data_list = "../../../common/dataset/MNIST/test" | |||

| data_list = "./MNIST_unzip/test" | |||

| batch_size = 32 | |||

| ds = generate_mnist_dataset(data_list, batch_size=batch_size) | |||

| @@ -70,7 +77,7 @@ def test_genetic_attack_on_mnist(): | |||

| test_labels = [] | |||

| predict_labels = [] | |||

| i = 0 | |||

| for data in ds.create_tuple_iterator(output_numpy=True): | |||

| for data in ds.create_tuple_iterator(): | |||

| i += 1 | |||

| images = data[0].astype(np.float32) | |||

| labels = data[1] | |||

| @@ -87,11 +94,11 @@ def test_genetic_attack_on_mnist(): | |||

| # attacking | |||

| attack = GeneticAttack(model=model, pop_size=6, mutation_rate=0.05, | |||

| per_bounds=0.4, step_size=0.25, temp=0.1, | |||

| per_bounds=0.1, step_size=0.25, temp=0.1, | |||

| sparse=True) | |||

| targeted_labels = np.random.randint(0, 10, size=len(true_labels)) | |||

| for i, true_l in enumerate(true_labels): | |||

| if targeted_labels[i] == true_l: | |||

| for i in range(len(true_labels)): | |||

| if targeted_labels[i] == true_labels[i]: | |||

| targeted_labels[i] = (targeted_labels[i] + 1) % 10 | |||

| start_time = time.clock() | |||

| success_list, adv_data, query_list = attack.generate( | |||

| @@ -128,6 +135,4 @@ def test_genetic_attack_on_mnist(): | |||

| if __name__ == '__main__': | |||

| # device_target can be "CPU", "GPU" or "Ascend" | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="CPU") | |||

| test_genetic_attack_on_mnist() | |||

examples/model_security/model_attacks/black_box/mnist_attack_hsja.py → example/mnist_demo/mnist_attack_hsja.py

View File

| @@ -11,21 +11,27 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import sys | |||

| import numpy as np | |||

| import pytest | |||

| from mindspore import Tensor | |||

| from mindspore import context | |||

| from mindspore.train.serialization import load_checkpoint, load_param_into_net | |||

| from mindarmour import BlackModel | |||

| from mindarmour.adv_robustness.attacks import HopSkipJumpAttack | |||

| from mindarmour.attacks.black.hop_skip_jump_attack import HopSkipJumpAttack | |||

| from mindarmour.attacks.black.black_model import BlackModel | |||

| from mindarmour.utils.logger import LogUtil | |||

| from lenet5_net import LeNet5 | |||

| sys.path.append("..") | |||

| from data_processing import generate_mnist_dataset | |||

| from examples.common.dataset.data_processing import generate_mnist_dataset | |||

| from examples.common.networks.lenet5.lenet5_net import LeNet5 | |||

| context.set_context(mode=context.GRAPH_MODE) | |||

| context.set_context(device_target="Ascend") | |||

| LOGGER = LogUtil.get_instance() | |||

| LOGGER.set_level('INFO') | |||

| TAG = 'HopSkipJumpAttack' | |||

| @@ -58,26 +64,31 @@ def random_target_labels(true_labels): | |||

| def create_target_images(dataset, data_labels, target_labels): | |||

| res = [] | |||

| for label in target_labels: | |||

| for data_label, data in zip(data_labels, dataset): | |||

| if data_label == label: | |||

| res.append(data) | |||

| for i in range(len(data_labels)): | |||

| if data_labels[i] == label: | |||

| res.append(dataset[i]) | |||

| break | |||

| return np.array(res) | |||

| @pytest.mark.level1 | |||

| @pytest.mark.platform_arm_ascend_training | |||

| @pytest.mark.platform_x86_ascend_training | |||

| @pytest.mark.env_card | |||

| @pytest.mark.component_mindarmour | |||

| def test_hsja_mnist_attack(): | |||

| """ | |||

| hsja-Attack test | |||

| """ | |||

| # upload trained network | |||

| ckpt_path = '../../../common/networks/lenet5/trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| ckpt_name = './trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| net = LeNet5() | |||

| load_dict = load_checkpoint(ckpt_path) | |||

| load_dict = load_checkpoint(ckpt_name) | |||

| load_param_into_net(net, load_dict) | |||

| net.set_train(False) | |||

| # get test data | |||

| data_list = "../../../common/dataset/MNIST/test" | |||

| data_list = "./MNIST_unzip/test" | |||

| batch_size = 32 | |||

| ds = generate_mnist_dataset(data_list, batch_size=batch_size) | |||

| @@ -88,7 +99,7 @@ def test_hsja_mnist_attack(): | |||

| test_labels = [] | |||

| predict_labels = [] | |||

| i = 0 | |||

| for data in ds.create_tuple_iterator(output_numpy=True): | |||

| for data in ds.create_tuple_iterator(): | |||

| i += 1 | |||

| images = data[0].astype(np.float32) | |||

| labels = data[1] | |||

| @@ -115,9 +126,9 @@ def test_hsja_mnist_attack(): | |||

| target_images = create_target_images(test_images, predict_labels, | |||

| target_labels) | |||

| attack.set_target_images(target_images) | |||

| success_list, adv_data, _ = attack.generate(test_images, target_labels) | |||

| success_list, adv_data, query_list = attack.generate(test_images, target_labels) | |||

| else: | |||

| success_list, adv_data, _ = attack.generate(test_images, None) | |||

| success_list, adv_data, query_list = attack.generate(test_images, None) | |||

| adv_datas = [] | |||

| gts = [] | |||

| @@ -125,18 +136,15 @@ def test_hsja_mnist_attack(): | |||

| if success: | |||

| adv_datas.append(adv) | |||

| gts.append(gt) | |||

| if gts: | |||

| if len(gts) > 0: | |||

| adv_datas = np.concatenate(np.asarray(adv_datas), axis=0) | |||

| gts = np.asarray(gts) | |||

| pred_logits_adv = model.predict(adv_datas) | |||

| pred_lables_adv = np.argmax(pred_logits_adv, axis=1) | |||

| accuracy_adv = np.mean(np.equal(pred_lables_adv, gts)) | |||

| mis_rate = (1 - accuracy_adv)*(len(adv_datas) / len(success_list)) | |||

| LOGGER.info(TAG, 'mis-classification rate of adversaries is : %s', | |||

| mis_rate) | |||

| accuracy_adv) | |||

| if __name__ == '__main__': | |||

| # device_target can be "CPU", "GPU" or "Ascend" | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="CPU") | |||

| test_hsja_mnist_attack() | |||

examples/model_security/model_attacks/white_box/mnist_attack_jsma.py → example/mnist_demo/mnist_attack_jsma.py

View File

| @@ -11,8 +11,10 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import sys | |||

| import time | |||

| import numpy as np | |||

| import pytest | |||

| from scipy.special import softmax | |||

| from mindspore import Model | |||

| @@ -20,30 +22,39 @@ from mindspore import Tensor | |||

| from mindspore import context | |||

| from mindspore.train.serialization import load_checkpoint, load_param_into_net | |||

| from mindarmour.adv_robustness.attacks import JSMAAttack | |||

| from mindarmour.adv_robustness.evaluations import AttackEvaluate | |||

| from mindarmour.attacks.jsma import JSMAAttack | |||

| from mindarmour.utils.logger import LogUtil | |||

| from mindarmour.evaluations.attack_evaluation import AttackEvaluate | |||

| from examples.common.dataset.data_processing import generate_mnist_dataset | |||

| from examples.common.networks.lenet5.lenet5_net import LeNet5 | |||

| from lenet5_net import LeNet5 | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="Ascend") | |||

| sys.path.append("..") | |||

| from data_processing import generate_mnist_dataset | |||

| LOGGER = LogUtil.get_instance() | |||

| LOGGER.set_level('INFO') | |||

| TAG = 'JSMA_Test' | |||

| @pytest.mark.level1 | |||

| @pytest.mark.platform_arm_ascend_training | |||

| @pytest.mark.platform_x86_ascend_training | |||

| @pytest.mark.env_card | |||

| @pytest.mark.component_mindarmour | |||

| def test_jsma_attack(): | |||

| """ | |||

| JSMA-Attack test | |||

| """ | |||

| # upload trained network | |||

| ckpt_path = '../../../common/networks/lenet5/trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| ckpt_name = './trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| net = LeNet5() | |||

| load_dict = load_checkpoint(ckpt_path) | |||

| load_dict = load_checkpoint(ckpt_name) | |||

| load_param_into_net(net, load_dict) | |||

| # get test data | |||

| data_list = "../../../common/dataset/MNIST/test" | |||

| data_list = "./MNIST_unzip/test" | |||

| batch_size = 32 | |||

| ds = generate_mnist_dataset(data_list, batch_size=batch_size) | |||

| @@ -54,7 +65,7 @@ def test_jsma_attack(): | |||

| test_labels = [] | |||

| predict_labels = [] | |||

| i = 0 | |||

| for data in ds.create_tuple_iterator(output_numpy=True): | |||

| for data in ds.create_tuple_iterator(): | |||

| i += 1 | |||

| images = data[0].astype(np.float32) | |||

| labels = data[1] | |||

| @@ -68,8 +79,8 @@ def test_jsma_attack(): | |||

| predict_labels = np.concatenate(predict_labels) | |||

| true_labels = np.concatenate(test_labels) | |||

| targeted_labels = np.random.randint(0, 10, size=len(true_labels)) | |||

| for i, true_l in enumerate(true_labels): | |||

| if targeted_labels[i] == true_l: | |||

| for i in range(len(true_labels)): | |||

| if targeted_labels[i] == true_labels[i]: | |||

| targeted_labels[i] = (targeted_labels[i] + 1) % 10 | |||

| accuracy = np.mean(np.equal(predict_labels, true_labels)) | |||

| LOGGER.info(TAG, "prediction accuracy before attacking is : %g", accuracy) | |||

| @@ -110,6 +121,4 @@ def test_jsma_attack(): | |||

| if __name__ == '__main__': | |||

| # device_target can be "CPU", "GPU" or "Ascend" | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="CPU") | |||

| test_jsma_attack() | |||

examples/model_security/model_attacks/white_box/mnist_attack_lbfgs.py → example/mnist_demo/mnist_attack_lbfgs.py

View File

| @@ -11,42 +11,52 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import sys | |||

| import time | |||

| import numpy as np | |||

| import pytest | |||

| from scipy.special import softmax | |||

| from mindspore import Model | |||

| from mindspore import Tensor | |||

| from mindspore import context | |||

| from mindspore.train.serialization import load_checkpoint, load_param_into_net | |||

| from mindspore.nn import SoftmaxCrossEntropyWithLogits | |||

| from mindarmour.adv_robustness.attacks import LBFGS | |||

| from mindarmour.adv_robustness.evaluations import AttackEvaluate | |||

| from mindarmour.attacks.lbfgs import LBFGS | |||

| from mindarmour.utils.logger import LogUtil | |||

| from mindarmour.evaluations.attack_evaluation import AttackEvaluate | |||

| from examples.common.networks.lenet5.lenet5_net import LeNet5 | |||

| from examples.common.dataset.data_processing import generate_mnist_dataset | |||

| from lenet5_net import LeNet5 | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="Ascend") | |||

| sys.path.append("..") | |||

| from data_processing import generate_mnist_dataset | |||

| LOGGER = LogUtil.get_instance() | |||

| LOGGER.set_level('INFO') | |||

| TAG = 'LBFGS_Test' | |||

| @pytest.mark.level1 | |||

| @pytest.mark.platform_arm_ascend_training | |||

| @pytest.mark.platform_x86_ascend_training | |||

| @pytest.mark.env_card | |||

| @pytest.mark.component_mindarmour | |||

| def test_lbfgs_attack(): | |||

| """ | |||

| LBFGS-Attack test for CPU device. | |||

| LBFGS-Attack test | |||

| """ | |||

| # upload trained network | |||

| ckpt_path = '../../../common/networks/lenet5/trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| ckpt_name = './trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| net = LeNet5() | |||

| load_dict = load_checkpoint(ckpt_path) | |||

| load_dict = load_checkpoint(ckpt_name) | |||

| load_param_into_net(net, load_dict) | |||

| # get test data | |||

| data_list = "../../../common/dataset/MNIST/test" | |||

| data_list = "./MNIST_unzip/test" | |||

| batch_size = 32 | |||

| ds = generate_mnist_dataset(data_list, batch_size=batch_size) | |||

| ds = generate_mnist_dataset(data_list, batch_size=batch_size, sparse=False) | |||

| # prediction accuracy before attack | |||

| model = Model(net) | |||

| @@ -55,7 +65,7 @@ def test_lbfgs_attack(): | |||

| test_labels = [] | |||

| predict_labels = [] | |||

| i = 0 | |||

| for data in ds.create_tuple_iterator(output_numpy=True): | |||

| for data in ds.create_tuple_iterator(): | |||

| i += 1 | |||

| images = data[0].astype(np.float32) | |||

| labels = data[1] | |||

| @@ -67,7 +77,7 @@ def test_lbfgs_attack(): | |||

| if i >= batch_num: | |||

| break | |||

| predict_labels = np.concatenate(predict_labels) | |||

| true_labels = np.concatenate(test_labels) | |||

| true_labels = np.argmax(np.concatenate(test_labels), axis=1) | |||

| accuracy = np.mean(np.equal(predict_labels, true_labels)) | |||

| LOGGER.info(TAG, "prediction accuracy before attacking is : %s", accuracy) | |||

| @@ -75,13 +85,13 @@ def test_lbfgs_attack(): | |||

| is_targeted = True | |||

| if is_targeted: | |||

| targeted_labels = np.random.randint(0, 10, size=len(true_labels)).astype(np.int32) | |||

| for i, true_l in enumerate(true_labels): | |||

| if targeted_labels[i] == true_l: | |||

| for i in range(len(true_labels)): | |||

| if targeted_labels[i] == true_labels[i]: | |||

| targeted_labels[i] = (targeted_labels[i] + 1) % 10 | |||

| else: | |||

| targeted_labels = true_labels.astype(np.int32) | |||

| loss = SoftmaxCrossEntropyWithLogits(sparse=True) | |||

| attack = LBFGS(net, is_targeted=is_targeted, loss_fn=loss) | |||

| targeted_labels = np.eye(10)[targeted_labels].astype(np.float32) | |||

| attack = LBFGS(net, is_targeted=is_targeted) | |||

| start_time = time.clock() | |||

| adv_data = attack.batch_generate(np.concatenate(test_images), | |||

| targeted_labels, | |||

| @@ -96,11 +106,12 @@ def test_lbfgs_attack(): | |||

| LOGGER.info(TAG, "prediction accuracy after attacking is : %s", | |||

| accuracy_adv) | |||

| attack_evaluate = AttackEvaluate(np.concatenate(test_images).transpose(0, 2, 3, 1), | |||

| np.eye(10)[true_labels], | |||

| np.concatenate(test_labels), | |||

| adv_data.transpose(0, 2, 3, 1), | |||

| pred_logits_adv, | |||

| targeted=is_targeted, | |||

| target_label=targeted_labels) | |||

| target_label=np.argmax(targeted_labels, | |||

| axis=1)) | |||

| LOGGER.info(TAG, 'mis-classification rate of adversaries is : %s', | |||

| attack_evaluate.mis_classification_rate()) | |||

| LOGGER.info(TAG, 'The average confidence of adversarial class is : %s', | |||

| @@ -118,6 +129,4 @@ def test_lbfgs_attack(): | |||

| if __name__ == '__main__': | |||

| # device_target can be "CPU", "GPU" or "Ascend" | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="CPU") | |||

| test_lbfgs_attack() | |||

examples/model_security/model_attacks/black_box/mnist_attack_nes.py → example/mnist_demo/mnist_attack_nes.py

View File

| @@ -11,21 +11,27 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import sys | |||

| import numpy as np | |||

| import pytest | |||

| from mindspore import Tensor | |||

| from mindspore import context | |||

| from mindspore.train.serialization import load_checkpoint, load_param_into_net | |||

| from mindarmour import BlackModel | |||

| from mindarmour.adv_robustness.attacks import NES | |||

| from mindarmour.attacks.black.natural_evolutionary_strategy import NES | |||

| from mindarmour.attacks.black.black_model import BlackModel | |||

| from mindarmour.utils.logger import LogUtil | |||

| from lenet5_net import LeNet5 | |||

| sys.path.append("..") | |||

| from data_processing import generate_mnist_dataset | |||

| from examples.common.dataset.data_processing import generate_mnist_dataset | |||

| from examples.common.networks.lenet5.lenet5_net import LeNet5 | |||

| context.set_context(mode=context.GRAPH_MODE) | |||

| context.set_context(device_target="Ascend") | |||

| LOGGER = LogUtil.get_instance() | |||

| LOGGER.set_level('INFO') | |||

| TAG = 'HopSkipJumpAttack' | |||

| @@ -67,26 +73,31 @@ def _pseudorandom_target(index, total_indices, true_class): | |||

| def create_target_images(dataset, data_labels, target_labels): | |||

| res = [] | |||

| for label in target_labels: | |||

| for data_label, data in zip(data_labels, dataset): | |||

| if data_label == label: | |||

| res.append(data) | |||

| for i in range(len(data_labels)): | |||

| if data_labels[i] == label: | |||

| res.append(dataset[i]) | |||

| break | |||

| return np.array(res) | |||

| @pytest.mark.level1 | |||

| @pytest.mark.platform_arm_ascend_training | |||

| @pytest.mark.platform_x86_ascend_training | |||

| @pytest.mark.env_card | |||

| @pytest.mark.component_mindarmour | |||

| def test_nes_mnist_attack(): | |||

| """ | |||

| hsja-Attack test | |||

| """ | |||

| # upload trained network | |||

| ckpt_path = '../../../common/networks/lenet5/trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| ckpt_name = './trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| net = LeNet5() | |||

| load_dict = load_checkpoint(ckpt_path) | |||

| load_dict = load_checkpoint(ckpt_name) | |||

| load_param_into_net(net, load_dict) | |||

| net.set_train(False) | |||

| # get test data | |||

| data_list = "../../../common/dataset/MNIST/test" | |||

| data_list = "./MNIST_unzip/test" | |||

| batch_size = 32 | |||

| ds = generate_mnist_dataset(data_list, batch_size=batch_size) | |||

| @@ -98,7 +109,7 @@ def test_nes_mnist_attack(): | |||

| test_labels = [] | |||

| predict_labels = [] | |||

| i = 0 | |||

| for data in ds.create_tuple_iterator(output_numpy=True): | |||

| for data in ds.create_tuple_iterator(): | |||

| i += 1 | |||

| images = data[0].astype(np.float32) | |||

| labels = data[1] | |||

| @@ -140,7 +151,7 @@ def test_nes_mnist_attack(): | |||

| target_image = create_target_images(test_images, true_labels, | |||

| target_class) | |||

| nes_instance.set_target_images(target_image) | |||

| tag, adv, queries = nes_instance.generate(np.array(initial_img), np.array(target_class)) | |||

| tag, adv, queries = nes_instance.generate(initial_img, target_class) | |||

| if tag[0]: | |||

| success += 1 | |||

| queries_num += queries[0] | |||

| @@ -154,6 +165,4 @@ def test_nes_mnist_attack(): | |||

| if __name__ == '__main__': | |||

| # device_target can be "CPU", "GPU" or "Ascend" | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="CPU") | |||

| test_nes_mnist_attack() | |||

examples/model_security/model_attacks/white_box/mnist_attack_pgd.py → example/mnist_demo/mnist_attack_pgd.py

View File

| @@ -11,42 +11,53 @@ | |||

| # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. | |||

| # See the License for the specific language governing permissions and | |||

| # limitations under the License. | |||

| import sys | |||

| import time | |||

| import numpy as np | |||

| import pytest | |||

| from scipy.special import softmax | |||

| from mindspore import Model | |||

| from mindspore import Tensor | |||

| from mindspore import context | |||

| from mindspore.train.serialization import load_checkpoint, load_param_into_net | |||

| from mindspore.nn import SoftmaxCrossEntropyWithLogits | |||

| from mindarmour.adv_robustness.attacks import ProjectedGradientDescent | |||

| from mindarmour.adv_robustness.evaluations import AttackEvaluate | |||

| from mindarmour.attacks.iterative_gradient_method import ProjectedGradientDescent | |||

| from mindarmour.utils.logger import LogUtil | |||

| from mindarmour.evaluations.attack_evaluation import AttackEvaluate | |||

| from lenet5_net import LeNet5 | |||

| context.set_context(mode=context.GRAPH_MODE, device_target="Ascend") | |||

| from examples.common.dataset.data_processing import generate_mnist_dataset | |||

| from examples.common.networks.lenet5.lenet5_net import LeNet5 | |||

| sys.path.append("..") | |||

| from data_processing import generate_mnist_dataset | |||

| LOGGER = LogUtil.get_instance() | |||

| LOGGER.set_level('INFO') | |||

| TAG = 'PGD_Test' | |||

| @pytest.mark.level1 | |||

| @pytest.mark.platform_arm_ascend_training | |||

| @pytest.mark.platform_x86_ascend_training | |||

| @pytest.mark.env_card | |||

| @pytest.mark.component_mindarmour | |||

| def test_projected_gradient_descent_method(): | |||

| """ | |||

| PGD-Attack test for CPU device. | |||

| PGD-Attack test | |||

| """ | |||

| # upload trained network | |||

| ckpt_path = '../../../common/networks/lenet5/trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| ckpt_name = './trained_ckpt_file/checkpoint_lenet-10_1875.ckpt' | |||

| net = LeNet5() | |||

| load_dict = load_checkpoint(ckpt_path) | |||

| load_dict = load_checkpoint(ckpt_name) | |||

| load_param_into_net(net, load_dict) | |||

| # get test data | |||

| data_list = "../../../common/dataset/MNIST/test" | |||

| data_list = "./MNIST_unzip/test" | |||

| batch_size = 32 | |||

| ds = generate_mnist_dataset(data_list, batch_size) | |||

| ds = generate_mnist_dataset(data_list, batch_size, sparse=False) | |||

| # prediction accuracy before attack | |||

| model = Model(net) | |||

| @@ -55,7 +66,7 @@ def test_projected_gradient_descent_method(): | |||

| test_labels = [] | |||

| predict_labels = [] | |||

| i = 0 | |||

| for data in ds.create_tuple_iterator(output_numpy=True): | |||

| for data in ds.create_tuple_iterator(): | |||

| i += 1 | |||

| images = data[0].astype(np.float32) | |||

| labels = data[1] | |||

| @@ -67,17 +78,16 @@ def test_projected_gradient_descent_method(): | |||

| if i >= batch_num: | |||

| break | |||

| predict_labels = np.concatenate(predict_labels) | |||

| true_labels = np.concatenate(test_labels) | |||

| true_labels = np.argmax(np.concatenate(test_labels), axis=1) | |||

| accuracy = np.mean(np.equal(predict_labels, true_labels)) | |||